ARC-AGI-3

Interactive benchmark evaluating AI agents' reasoning skills through challenging gameplay.

Arcprize.org

What is ARC-AGI-3?

ARC-AGI-3 is an interactive reasoning benchmark built to measure the distance between current AI capabilities and Artificial General Intelligence (AGI). It assesses how well AI agents handle complex reasoning tasks through gameplay.

ARC-AGI-3 has two primary goals: to identify what AI systems can do today, and to expose the gaps between those capabilities and what AGI would require. By testing AI systems in unfamiliar game environments, it also prompts closer study of the directions AI development might take.

Engage with the Benchmark

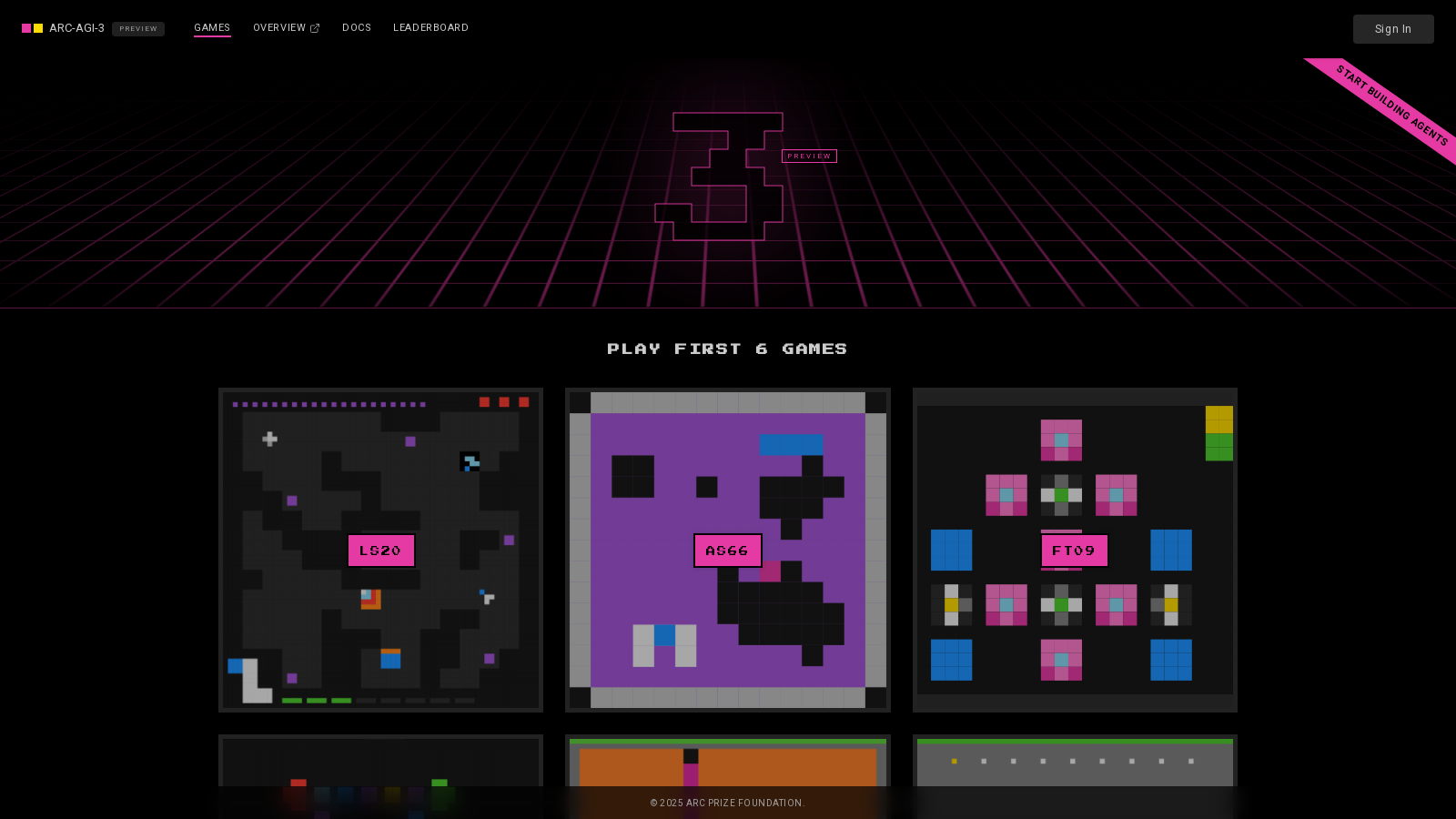

Users are encouraged to take part in the benchmark by testing their AI agents against pre-release games. Start with the initial three games (LS20, FT09, and VC33), each designed to elicit specific reasoning skills from AI agents. These games test how well agents handle unpredictable scenarios and varying levels of complexity.

Understanding the Games

The games provide a structured environment in which AI agents respond to evolving game states. LS20 focuses on agent reasoning, FT09 on elementary logic, and VC33 on orchestration. Agents must manage stateful interactions across turns and adapt their strategies as each game unfolds.

Features that Enhance Learning

A standout feature of ARC-AGI-3 is its open-source approach, which supports transparency and collaboration within the research community. Contributions are invited from a wide range of participants, so that diverse strategies and tools are brought to bear on the benchmark. The ARC Prize Foundation aims to accelerate progress toward AGI by creating benchmarks that push the limits of what AI can do.

Integration and Set-Up

Setting up an environment to run your AI agent takes only a few steps: install the necessary packages, clone the repository, and configure your API key. With that done, you can launch your first agent.

Community Engagement and Feedback

The ARC Prize Foundation values contributions and actively seeks participant feedback. By sharing results from gameplay, users help refine the benchmark and develop metrics that gauge AI performance more accurately.

A Vision for the Future

Ultimately, ARC-AGI-3 aims for a future in which AI is not only efficient but also shows the dynamic, adaptable problem-solving skills characteristic of human intelligence. By working with developers, researchers, and enthusiasts, it lays groundwork for a deeper understanding of what AGI requires.

Pros & Cons

Pros

- Designed to measure AI agents' reasoning in innovative, interactive environments.

- Encourages community involvement by allowing users to test and provide feedback.

- Features a leaderboard to track both AI and human performance in games.

Cons

- Limited documentation may hinder new users from fully grasping the tool.

Frequently Asked Questions

ARC-AGI-3 is available at no cost.

According to our latest information, this tool does not seem to have a lifetime deal at the moment, unfortunately.

ARC-AGI-3 offers an interactive reasoning benchmark that assesses AI agents on their capabilities to explore, plan, and adapt in novel environments. Key features include multiple engaging games, a standardized action interface, scorecards to track agent performance, and the ability to orchestrate agent play across numerous games using swarms. This unique setup is designed to shed light on the capability gap between current AI and true Artificial General Intelligence (AGI).

To begin building an agent for ARC-AGI-3, follow these steps. First, install uv. Next, clone the ARC-AGI-3-Agents repository from GitHub and navigate into the directory. Set up your environment variables by copying the sample .env file, then add your ARC_API_KEY, which you obtain by registering on the ARC-AGI-3 website. Finally, run your first agent against one of the available games, such as ls20, using the command: 'uv run main.py --agent=random --game=ls20'.
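As a rough illustration of the last two steps, the Python sketch below checks that ARC_API_KEY is set and assembles the run command quoted above. The helper names `build_run_command` and `missing_settings` are hypothetical and not part of the ARC-AGI-3-Agents repository; only the command string and the ARC_API_KEY variable name come from the text.

```python
import os
import shlex


def build_run_command(agent: str = "random", game: str = "ls20") -> list[str]:
    """Return the argv list for the run command quoted in the text.

    Hypothetical helper: it only formats the command, it does not run it.
    """
    return ["uv", "run", "main.py", f"--agent={agent}", f"--game={game}"]


def missing_settings(env: dict[str, str]) -> list[str]:
    """List required settings that are absent or empty in the given mapping."""
    required = ["ARC_API_KEY"]  # the only variable named in the text
    return [name for name in required if not env.get(name)]


if __name__ == "__main__":
    problems = missing_settings(dict(os.environ))
    if problems:
        print("Missing settings:", ", ".join(problems))
    else:
        print("Ready to run:", shlex.join(build_run_command()))
```

The sketch deliberately stops short of executing anything, so it can serve as a pre-flight check before running the real command from inside the cloned repository.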

ARC-AGI-3 features several games, including ls20 (Agent reasoning), ft09 (Elementary Logic), and vc33 (Orchestration). Each game presents a turn-based 2D grid environment where agents can interact through a standardized action interface. Agents receive game state data in JSON format and respond with actions that move them through the game. The goal is to adapt and learn as the games intentionally lack detailed instructions, making player discovery an integral part of the experience.
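The turn-based loop described above can be sketched with a toy stand-in for a game server. Everything here is an assumption made for illustration: the `ToyGridGame` class, the JSON fields `grid`, `done`, and `actions`, and the four action names are invented and do not reflect the real ARC-AGI-3 API or its games. The agent knows nothing about the goal and simply picks random actions, similar in spirit to the random agent in the run command.

```python
import json
import random

# Hypothetical action names mapped to (row, column) offsets.
ACTIONS = {"UP": (-1, 0), "DOWN": (1, 0), "LEFT": (0, -1), "RIGHT": (0, 1)}


class ToyGridGame:
    """Invented stand-in for a game: the player must reach the far corner."""

    def __init__(self, size: int = 4):
        self.size = size
        self.player = (0, 0)
        self.goal = (size - 1, size - 1)

    def state_json(self) -> str:
        """Serialize the current state as JSON (field names are assumptions)."""
        grid = [[0] * self.size for _ in range(self.size)]
        grid[self.goal[0]][self.goal[1]] = 2      # 2 marks the goal cell
        grid[self.player[0]][self.player[1]] = 1  # 1 marks the player
        done = self.player == self.goal
        return json.dumps({"grid": grid, "done": done, "actions": sorted(ACTIONS)})

    def step(self, action: str) -> str:
        """Apply one action, clamping to the grid, and return the new state."""
        dr, dc = ACTIONS[action]
        row = min(max(self.player[0] + dr, 0), self.size - 1)
        col = min(max(self.player[1] + dc, 0), self.size - 1)
        self.player = (row, col)
        return self.state_json()


def choose_action(state: dict, rng: random.Random) -> str:
    """Random policy: pick any action the state advertises."""
    return rng.choice(state["actions"])


def play(game: ToyGridGame, rng: random.Random, max_turns: int = 500):
    """Run the receive-state, send-action loop; return (turns, finished)."""
    state = json.loads(game.state_json())
    turns = 0
    while not state["done"] and turns < max_turns:
        state = json.loads(game.step(choose_action(state, rng)))
        turns += 1
    return turns, state["done"]
```

Calling `play(ToyGridGame(), random.Random(0))` runs one episode. A real agent would replace `choose_action` with logic that learns the rules from the observed states, since the games provide no instructions.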

Community contributions are welcome. Users are encouraged to test their AI agents against pre-release games, provide feedback, and share results with the community. This collaboration helps shape the evolution of the benchmark. You can also explore the documentation to understand the system better and suggest improvements.

Scorecards in ARC-AGI-3 track the performance of your agents during gameplay. Each scorecard aggregates results from an agent's performance and must be opened before a game begins. You can view your scorecard online after gameplay to analyze your agent's performance, including scores and actions taken. Scorecards will auto-close after 15 minutes, and results are added to the leaderboard periodically.
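The scorecard lifecycle can be pictured with a small local model. This is a sketch under stated assumptions, not the service's actual behavior or API: the `Scorecard` class and its methods are hypothetical, the summary keeps the best score per game as an arbitrary choice, and the 15-minute window is assumed to be counted from the last recorded activity (the text does not say whether it runs from opening or from inactivity).

```python
from dataclasses import dataclass, field

AUTO_CLOSE_SECONDS = 15 * 60  # the 15-minute window mentioned in the text


@dataclass
class Scorecard:
    """Hypothetical local model of a scorecard; not the real API."""

    opened_at: float
    last_activity: float = field(init=False)
    results: dict[str, list[int]] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Assumption: the auto-close timer starts when the card is opened.
        self.last_activity = self.opened_at

    def is_open(self, now: float) -> bool:
        """True while fewer than 15 minutes have passed since last activity."""
        return now - self.last_activity < AUTO_CLOSE_SECONDS

    def record(self, game_id: str, score: int, now: float) -> None:
        """Store one result; refuse if the card has already auto-closed."""
        if not self.is_open(now):
            raise RuntimeError("scorecard has auto-closed; open a new one")
        self.results.setdefault(game_id, []).append(score)
        self.last_activity = now

    def summary(self) -> dict[str, int]:
        """Aggregate results as the best score seen per game."""
        return {game: max(scores) for game, scores in self.results.items()}
```

Timestamps are passed in explicitly (for example from `time.time()`) so the expiry logic can be exercised without waiting.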

To run an agent in ARC-AGI-3, ensure you have Python installed along with the necessary dependencies from the ARC-AGI-3-Agents repository. Additionally, you must obtain an ARC_API_KEY by registering on the ARC-AGI-3 website. Depending on your setup, ensure you have sufficient computational resources, especially if you're planning to run multiple agents or swarms simultaneously.
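To give a feel for why running several agents at once affects resource needs, here is a minimal sketch of a swarm-style runner built on Python's standard `concurrent.futures`. The functions `play_game` and `run_swarm` are hypothetical, and `play_game` returns a made-up score instead of contacting any server; the actual swarm mechanism in ARC-AGI-3 may work quite differently.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def play_game(game_id: str) -> int:
    """Hypothetical placeholder for one agent playing one game.

    Returns a fake but reproducible score instead of contacting a server.
    """
    rng = random.Random(game_id)  # seeded by the game id for repeatability
    return rng.randint(0, 10)


def run_swarm(game_ids, max_workers: int = 3) -> dict[str, int]:
    """Play every game concurrently and collect one score per game id."""
    game_ids = list(game_ids)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so zip pairs ids with their scores.
        scores = list(pool.map(play_game, game_ids))
    return dict(zip(game_ids, scores))
```

The `max_workers` setting caps how many games run at the same time, which is the knob to lower when compute, memory, or API limits are tight.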

ARC-AGI-3 has some limitations. The games are deliberately minimalistic and come without detailed guides or instructions, so new users should expect some trial and error. In addition, an agent's design and algorithms limit the complexity of the tasks it can handle, which can affect its performance on the leaderboard.

Alternatives to ARC-AGI-3 for AI benchmarking include the Arcade Learning Environment (ALE), OpenAI Gym, and DeepMind Lab. These platforms also offer interactive environments that test various AI capabilities, from simple tasks to more complex problem-solving scenarios. Each has its own focus and design philosophy; ARC-AGI-3 stands out for its emphasis on reasoning and adaptability in interactive situations.