Drag Your GAN

Interactively reshape images by dragging points to adjust pose, expression, and layout with high precision.

vcai.mpi-inf.mpg.de

What is Drag Your GAN?

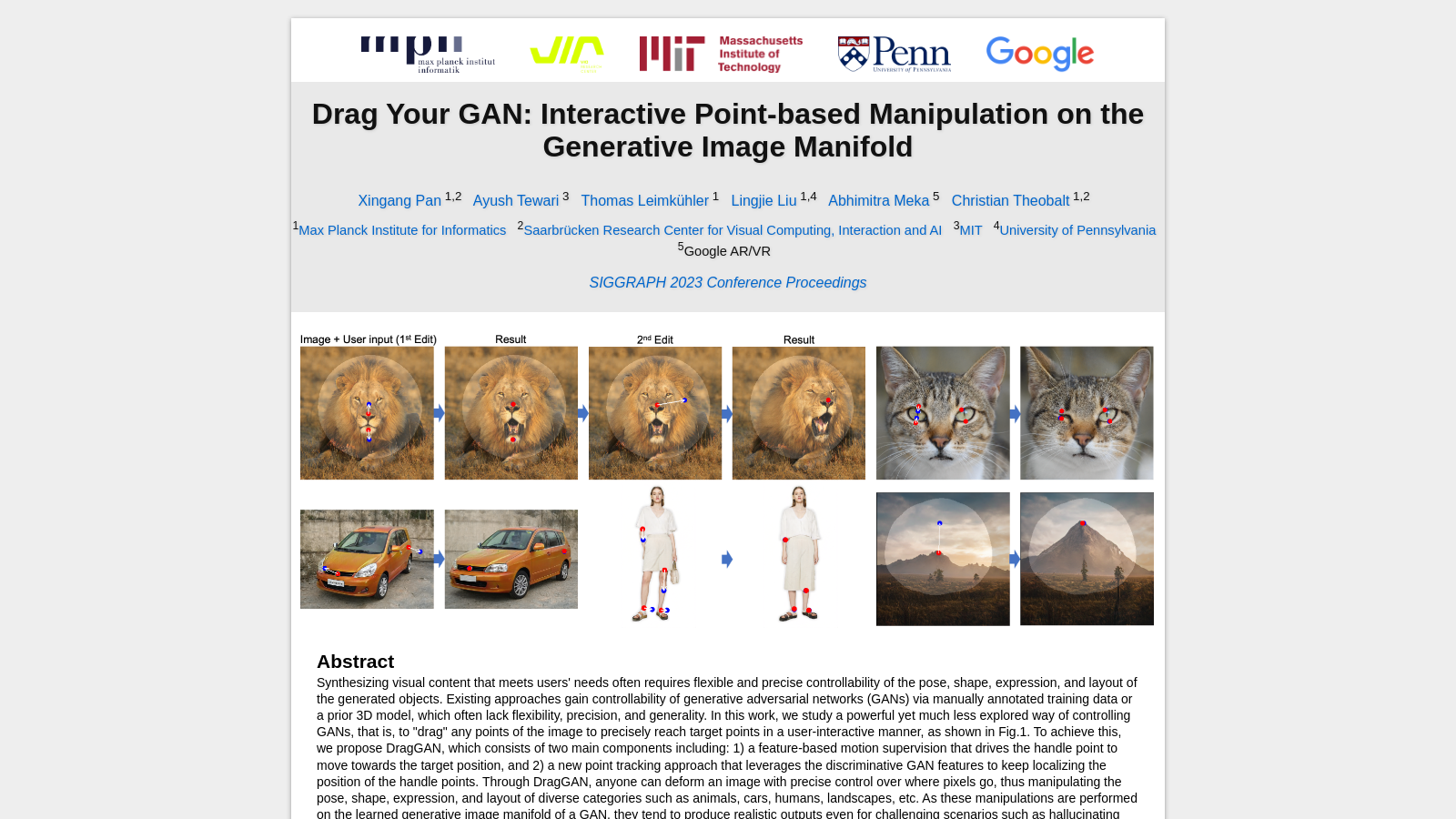

Drag Your GAN, or DragGAN for short, is an interactive, point-based method for editing images produced by a Generative Adversarial Network (GAN). Earlier approaches to controlling GANs often depend on manually annotated training data or a prior 3D model. DragGAN takes a different route: users "drag" any points in an image so that they precisely reach chosen target positions.

DragGAN has two main components. The first is feature-based motion supervision, which drives each handle point toward its target position. The second is a point-tracking technique that uses the GAN's discriminative features to keep locating the handle points as the image changes. Together they let users deform an image with precise control over pose, shape, expression, and layout across many categories, from the face of a lion to the body of a car. Because the edits stay on the GAN's learned image manifold, results tend to remain realistic even in hard cases such as hallucinating occluded content or deforming shapes in a way that follows the object's rigidity.
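The point-tracking idea can be illustrated with a small sketch. It assumes the feature map is given as a nested list `feature_map[row][col]` of feature vectors, that similarity is measured with L1 distance, and that the search window is a square of a given radius around the current handle position. The function names `l1_distance` and `track_point` are hypothetical and the data is a toy example, not the actual DragGAN implementation, which operates on the generator's feature maps.

```python
def l1_distance(a, b):
    """L1 distance between two feature vectors of equal length."""
    return sum(abs(x - y) for x, y in zip(a, b))


def track_point(feature_map, ref_feature, point, radius):
    """Nearest-neighbour search for a handle point (illustrative only).

    Looks at every position in a square window around `point` and returns
    the (row, col) whose feature is closest to `ref_feature`, the feature
    the handle point had before editing started. Ties keep the current point.
    """
    rows, cols = len(feature_map), len(feature_map[0])
    r0, c0 = point
    best = point
    best_dist = l1_distance(feature_map[r0][c0], ref_feature)
    for r in range(max(0, r0 - radius), min(rows, r0 + radius + 1)):
        for c in range(max(0, c0 - radius), min(cols, c0 + radius + 1)):
            dist = l1_distance(feature_map[r][c], ref_feature)
            if dist < best_dist:
                best, best_dist = (r, c), dist
    return best


# Toy 5x5 feature map with 2-dimensional features (assumed data).
feature_map = [[[0.0, 0.0] for _ in range(5)] for _ in range(5)]
ref_feature = [1.0, 2.0]           # feature of the handle point at the start
feature_map[2][3] = [1.0, 2.0]     # after an edit step, the content moved one pixel right

new_point = track_point(feature_map, ref_feature, (2, 2), radius=1)
print(new_point)  # (2, 3)
```

Running the search after every optimization step is what lets the handle position follow the content as it moves, rather than drifting away from the part of the object the user originally clicked.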

What sets DragGAN apart is the combination of flexibility, precision, and generality. Previous approaches tend to fall short on at least one of these: they are restricted to certain object categories, offer limited control over spatial attributes, or lack the precision needed for fine editing. With DragGAN, users can place any number of handle points and matching target points on an image and change diverse spatial attributes, and the method is not tied to a particular object category.

DragGAN is also efficient, because both components operate in the GAN's feature space: motion supervision and point tracking are computed directly on the generator's features. As a result, an edit typically takes only a few seconds on a high-end GPU. That is fast enough for live, interactive editing sessions in which users try different layouts until they reach the intended result.

In summary, DragGAN is a general approach to intuitive, point-based image editing that does not rely on domain-specific modelling or additional networks. It uses a pre-trained GAN to synthesize images that follow the user's input while staying realistic. Potential applications range from enhancing visual media content to designing realistic virtual environments, and the team behind DragGAN has named 3D generative models as a direction for future work.

Pros & Cons

Pros

- Enables precise image manipulation by dragging points to target locations interactively.

- Demonstrates realistic output, even for complex scenarios like occluded content.

- Utilizes feature-based motion supervision for enhanced control over generative models.

Frequently Asked Questions

Drag Your GAN is available at no cost.

This tool offers a lifetime deal.

With Drag Your GAN, users can manipulate a wide range of image categories, including animals, cars, humans, landscapes, and more. The system enables interactive, point-based manipulation, allowing you to precisely control aspects such as pose, shape, expression, and layout of the generated objects in these categories.

Drag Your GAN uses feature-based motion supervision to move user-selected handle points toward their target positions. A point-tracking step then uses features from the generative adversarial network (GAN) to relocate those points after each update, which keeps the deformation precise.
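For readers who want the underlying objective, the motion supervision step can be written roughly as below. This is a sketch following the formulation in the DragGAN paper; the symbols are notation introduced here for illustration and are not defined elsewhere on this page.

$$
\mathcal{L} = \sum_{i} \sum_{q_i \in \Omega_1(p_i, r_1)} \left\| \mathrm{sg}\big(F(q_i)\big) - F(q_i + d_i) \right\|_1 + \lambda \left\| (F - F_0) \cdot (1 - M) \right\|_1,
\qquad
d_i = \frac{t_i - p_i}{\left\| t_i - p_i \right\|_2}
$$

Here $p_i$ is a handle point, $t_i$ its target, $d_i$ the unit vector pointing from handle to target, $F$ the generator's feature map, $F_0$ the feature map of the initial image, $\Omega_1(p_i, r_1)$ a small patch of radius $r_1$ around the handle, $\mathrm{sg}(\cdot)$ a stop-gradient, $M$ an optional binary mask marking the region that is allowed to move, and $\lambda$ a weighting factor. Minimizing the first term nudges the patch around each handle one small step toward the target; the second term keeps the unmasked region unchanged.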

Yes, Drag Your GAN can edit real images through a process called GAN inversion. Inversion first embeds the real image into the GAN's latent space; the same point-based manipulation used for generated images can then be applied to it.

While Drag Your GAN offers advanced manipulation capabilities, users should note that the quality and accuracy of the manipulated images can depend on the complexity of the scene and the underlying GAN model. Furthermore, as a research project, it may not have the stability and support features of commercial software. Therefore, users are encouraged to consult the official documentation for detailed limitations and guidance on usage.

Drag Your GAN is primarily a research tool developed by the Max Planck Institute for Informatics, and it may require specific computational resources for optimal performance. Users should refer to the official website for system requirements and compatibility details, particularly regarding hardware specifications and operating systems suitable for running the tool.

The development of Drag Your GAN is based on advanced research in the field of computer vision and generative models, with a specific focus on the controllability of GANs. The project was presented at the SIGGRAPH 2023 Conference, highlighting its innovative use of point-based manipulation to achieve high-quality image editing results that surpass previous methods.

While the website provides valuable information and documentation on the core features and research behind Drag Your GAN, users seeking in-depth guides or tutorials may need to refer to external resources or community forums for more comprehensive support. Check the official site for any updates on available tutorials or user guides.

Drag Your GAN, being a research project, may not have a dedicated support system like commercial software. However, users can contact the researchers directly through the provided email addresses for questions or clarification. Additionally, checking the project’s official website may yield further insights and updates.