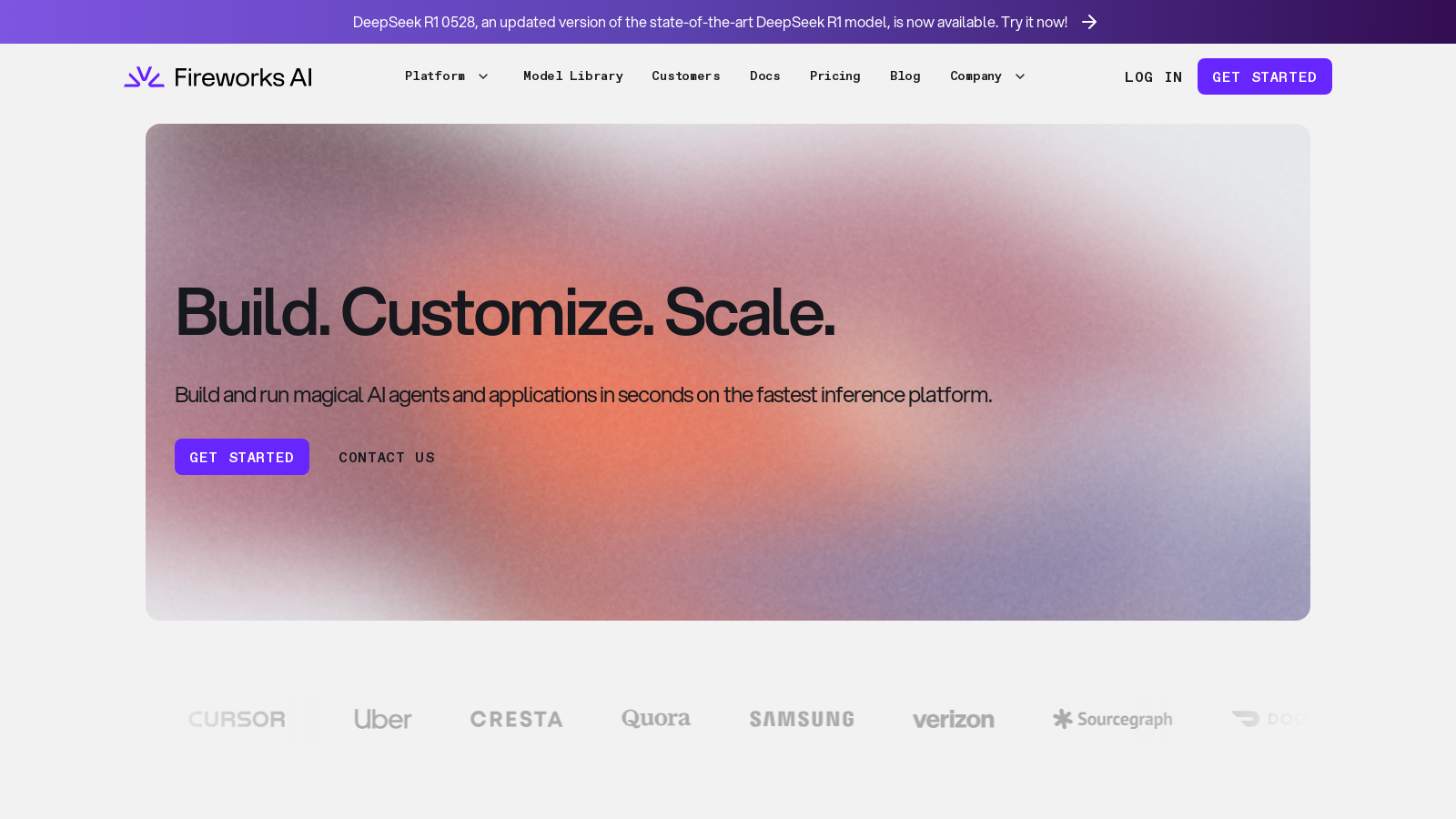

Fireworks

Easily build and customize generative AI applications using open-source models without extensive setup.

What is Fireworks?

Fireworks is a platform for building and deploying generative AI applications with open-source models. It provides a customizable environment where you can run and tailor models with just a few lines of code, and it aims to streamline AI integration at every stage, from initial experimentation to enterprise-scale deployment.

Getting Started with Fireworks

Getting started with Fireworks is straightforward. After signing up for an account, you can use popular open-source models such as Llama and DeepSeek right away. Because inference is serverless, there is no GPU configuration to manage, so you can move straight to building your AI application.

Features of Fireworks

Fireworks is packed with features that cater to both new developers and seasoned AI professionals:

- Custom Model Implementation: Fireworks lets you run a range of pre-configured models without extensive setup. The platform supports rapid experimentation with open models, so you can deploy and customize them on the fly.

- Blazing Speed and Scalability: Fireworks is built for speed, offering real-time performance with high throughput and low latency. This makes it well suited to mission-critical applications where performance is paramount.

- Cost-Efficient AI Development: Pay-as-you-go pricing means you pay only for what you use, whether billing is based on tokens processed or on GPU usage. This lets you match costs to the size of each project.

- Enterprise-Level Solutions: For enterprise clients, Fireworks offers flexible deployment options, including on-premises, virtual private cloud (VPC), and cloud deployments. The platform also reports compliance with SOC 2 Type II, GDPR, and HIPAA.

- Fine-Tuning Capabilities: Fine-tuning lets you adapt models to your specific applications. Supported techniques include reinforcement learning and adaptive speculation, which help maximize model performance.
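Choosing between token-based and GPU-based billing comes down to simple arithmetic. The sketch below compares the two and finds the monthly token volume at which a dedicated GPU would cost the same as per-token billing. All prices are hypothetical placeholders, not Fireworks' actual rates, and the function names are illustrative.

```python
# All prices used here are HYPOTHETICAL placeholders, not Fireworks' actual rates.


def serverless_cost(tokens: int, price_per_million_tokens: float) -> float:
    """Cost of processing `tokens` tokens at a flat price per million tokens."""
    return tokens / 1_000_000 * price_per_million_tokens


def gpu_cost(hours: float, price_per_gpu_hour: float, num_gpus: int = 1) -> float:
    """Cost of keeping `num_gpus` GPUs running for `hours` hours."""
    return hours * price_per_gpu_hour * num_gpus


def break_even_tokens(price_per_million_tokens: float, hours: float,
                      price_per_gpu_hour: float, num_gpus: int = 1) -> float:
    """Token volume at which per-token billing costs the same as the GPU time."""
    return gpu_cost(hours, price_per_gpu_hour, num_gpus) / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Hypothetical example: $0.20 per 1M tokens vs. $3.00 per GPU-hour, ~730 hours/month.
    monthly_tokens = 500_000_000
    print(f"serverless:    ${serverless_cost(monthly_tokens, 0.20):,.2f}")
    print(f"1 GPU, 730 h:  ${gpu_cost(730, 3.00):,.2f}")
    print(f"break-even:    {break_even_tokens(0.20, 730, 3.00):,.0f} tokens/month")
```

The break-even figure only matters if a single GPU can actually serve that many tokens in the period, so check throughput before moving a workload to dedicated GPUs.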

Use Cases

Fireworks supports a wide range of applications, from code assistants and voice agents to deep code analysis. Companies such as Sourcegraph and Quora use the platform to power their AI-driven tools, a sign of its reliability and performance.

Customer Testimonials

Feedback from Fireworks users highlights its strengths:

- Cursor: "Fireworks has been an amazing partner in getting our Fast Apply and Copilot++ models running performantly. They exceeded other competitors we reviewed on performance."

- Quora: "Fireworks is the best platform out there to serve open-source LLMs. We appreciate their infrastructure, which enables us to serve our domain models effectively."

The community surrounding Fireworks also adds to its value, providing forums and a Discord server for knowledge sharing among developers.

In short, Fireworks combines speed, customization, and cost-effectiveness, which makes it a strong choice for developers looking to build generative AI applications on open models.

Pros & Cons

Pros

- Build and customize AI applications quickly, with minimal setup and no GPUs to manage.

- Seamless scaling across multiple clouds enhances availability and performance consistency.

- Real-time inference with low latency and high throughput optimized for enterprise-level applications.

Cons

- Limited details on specific supported model types may confuse new users.

Frequently Asked Questions

We don't have pricing information available at the moment, so please check the Fireworks website.

According to our latest information, this tool does not currently offer a lifetime deal.

Fireworks offers advanced tuning techniques, including reinforcement learning, quantization-aware tuning, and adaptive speculation. These methods help maximize the quality of results from open models, allowing users to significantly customize their outputs. By leveraging these techniques, developers can achieve improved performance and optimize their models for specific use cases without having to deal with complex setup processes.

Fireworks can run models across several modalities, including text, audio, image, and embeddings. You can use popular models such as DeepSeek, Llama, and Qwen, or upload your own models as long as they use a supported architecture. This breadth of model support makes Fireworks suitable for a variety of applications, including generative AI and coding assistants.

Fireworks facilitates seamless scaling by automatically provisioning the latest GPUs across multiple cloud providers and regions. This means you can deploy your applications globally without needing infrastructure management. Whether you opt for serverless, on-demand, or reserved GPU deployments, Fireworks handles the scaling and ensures availability and consistent performance, allowing you to concentrate on developing your applications.

Yes, Fireworks lets you customize AI models with your own data and minimal setup. Using features such as fine-tuning and FireOptimizer, you can quickly adapt open models to your requirements and apply task-specific optimizations that help the model perform well on your data.
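Fine-tuning on your own data starts with a training file. The sketch below converts prompt/answer pairs into JSONL text, one chat-formatted example per line. The `messages` schema is an assumption based on the common chat fine-tuning convention; confirm the exact format in the Fireworks fine-tuning documentation. `to_chat_record` and `build_jsonl` are hypothetical helper names.

```python
import json
from typing import Iterable, List, Optional, Tuple


def to_chat_record(prompt: str, answer: str, system: Optional[str] = None) -> dict:
    """Build one training example as a list of chat messages (assumed schema)."""
    messages = []
    if system:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": prompt})
    messages.append({"role": "assistant", "content": answer})
    return {"messages": messages}


def build_jsonl(pairs: Iterable[Tuple[str, str]], system: Optional[str] = None) -> str:
    """Return JSONL text with one record per line, skipping pairs with an empty field."""
    lines: List[str] = []
    for prompt, answer in pairs:
        if not prompt.strip() or not answer.strip():
            continue  # drop incomplete examples rather than train on them
        record = to_chat_record(prompt, answer, system)
        lines.append(json.dumps(record, ensure_ascii=False))
    return "".join(line + "\n" for line in lines)


if __name__ == "__main__":
    # Toy data for illustration only.
    pairs = [
        ("How do I reset my password?", "Use the 'Forgot password' link on the sign-in page."),
        ("", "This pair is skipped because the prompt is empty."),
    ]
    print(build_jsonl(pairs, system="You are a concise support assistant."), end="")
```

To save the file before uploading, write the returned text with `Path("train.jsonl").write_text(build_jsonl(pairs), encoding="utf-8")`.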

Fireworks provides several resources to support developers, including a cookbook with code examples, tutorials, and guides. There is also a Discord forum for community discussions, troubleshooting, and sharing best practices. Additionally, you can refer to the documentation for detailed instructions or contact their support team directly for personalized assistance.

Fireworks offers several deployment options, but each comes with limitations. The serverless option, which is billed at a fixed price per token, is subject to rate limits, and performance and throughput can vary with the deployment type and usage pattern. Assess your specific needs and choose the deployment strategy that fits them best to avoid bottlenecks.
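When a serverless deployment hits its rate limit, a client should back off instead of retrying immediately. The sketch below retries with exponential backoff and full jitter. `send` is a hypothetical zero-argument callable you supply that returns `(status_code, body)`; treating HTTP 429 (Too Many Requests) and transient 5xx codes as retryable is a general HTTP convention, not documented Fireworks behavior.

```python
import random
import time
from typing import Callable, Tuple

# Statuses treated as transient: rate limiting plus common temporary server errors.
RETRYABLE = {429, 500, 502, 503, 504}


def call_with_backoff(send: Callable[[], Tuple[int, str]],
                      max_attempts: int = 5,
                      base_delay: float = 0.5,
                      max_delay: float = 8.0,
                      sleep: Callable[[float], None] = time.sleep) -> Tuple[int, str]:
    """Call `send` until it returns a non-retryable status or attempts run out."""
    for attempt in range(max_attempts):
        status, body = send()
        if status not in RETRYABLE or attempt == max_attempts - 1:
            return status, body  # success, permanent error, or final attempt
        # Full jitter: wait a random time in [0, min(max_delay, base_delay * 2**attempt)].
        delay = min(max_delay, base_delay * (2 ** attempt))
        sleep(random.uniform(0, delay))
    raise ValueError("max_attempts must be at least 1")
```

If responses include a `Retry-After` header, honoring it is usually better than a computed delay. The injectable `sleep` parameter keeps the function easy to test without real waiting.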

To get started with Fireworks, sign up for an account on the platform. Once registered, you can generate an API key, which is required for making API calls. Next, set up your development environment; Fireworks provides a straightforward guide to installing the required SDK so you can integrate its services into your applications quickly.
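With an API key in hand, a first call can be made using only the Python standard library. The sketch below targets Fireworks' OpenAI-compatible chat-completions endpoint. The URL and model ID reflect Fireworks' public documentation at the time of writing and may change, reading the key from `FIREWORKS_API_KEY` is an assumed naming convention, and `build_request` and `complete` are illustrative helper names.

```python
import json
import os
import urllib.request

API_URL = "https://api.fireworks.ai/inference/v1/chat/completions"
MODEL = "accounts/fireworks/models/llama-v3p1-8b-instruct"  # illustrative model ID


def build_request(prompt: str, api_key: str, model: str = MODEL,
                  max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a chat-completion POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def complete(prompt: str) -> str:
    """Send the request and return the first choice's text (needs network and a key)."""
    api_key = os.environ["FIREWORKS_API_KEY"]
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=30) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

Calling `complete("Explain serverless inference in one sentence.")` sends a real request. Because the endpoint follows the OpenAI request format, an OpenAI-compatible SDK pointed at the same base URL should also work; check the Fireworks documentation for the SDKs it officially supports.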

To get the most out of Fireworks' AI models, start with smaller models for low-latency tasks and scale up to larger models when you need higher-quality output. Use the advanced tuning features to improve performance, and monitor system health and workloads continuously to manage resources efficiently. Finally, take advantage of the community resources to learn from other developers' experience.
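Monitoring latency is what tells you when a smaller, faster model is needed or when a larger one fits your budget. The sketch below keeps a rolling window of request latencies and reports nearest-rank percentiles. It is plain client-side bookkeeping, not a Fireworks API; `LatencyMonitor`, the window size, and the latency budget are illustrative choices.

```python
import math
import time
from collections import deque


class LatencyMonitor:
    """Keeps the most recent `window` latencies (in ms) and reports percentiles."""

    def __init__(self, window: int = 200):
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically

    def record(self, latency_ms: float) -> None:
        self.samples.append(latency_ms)

    def percentile(self, p: float) -> float:
        """Nearest-rank percentile for p in (0, 100]."""
        if not self.samples:
            raise ValueError("no samples recorded yet")
        ordered = sorted(self.samples)
        rank = math.ceil(p * len(ordered) / 100)
        return ordered[max(rank, 1) - 1]

    def within_budget(self, budget_ms: float, p: float = 95) -> bool:
        """True if the p-th percentile latency is at or below the budget."""
        return self.percentile(p) <= budget_ms


if __name__ == "__main__":
    monitor = LatencyMonitor(window=100)
    for _ in range(5):
        start = time.perf_counter()
        time.sleep(0.01)  # stand-in for a model call
        monitor.record((time.perf_counter() - start) * 1000)
    print(f"p95 = {monitor.percentile(95):.1f} ms, "
          f"within 500 ms budget: {monitor.within_budget(500)}")
```

If the p95 latency of a large model keeps exceeding your budget for an interactive task, that is a signal to route the task to a smaller model.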