Langfuse

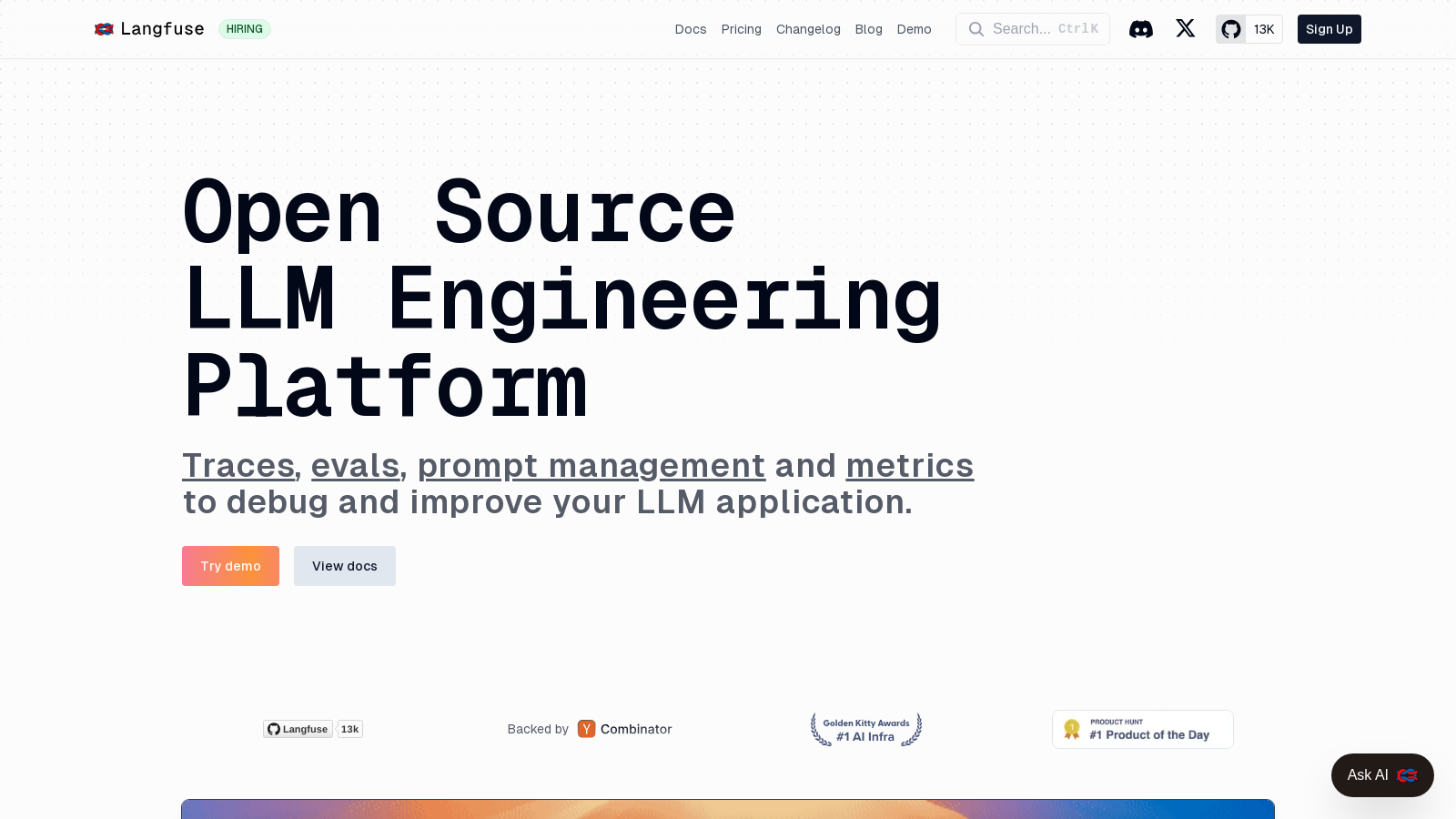

An open-source platform for developing, debugging, and monitoring Large Language Model applications.

Langfuse.com


What is Langfuse?

Langfuse is an open-source platform that helps teams develop, monitor, and debug Large Language Model (LLM) applications. It combines tracing, prompt management, evaluation, and analytics in a single engineering platform. Langfuse recently moved to a fully open-source model: all product features are now released under the MIT license. This makes those capabilities available to any developer and invites community collaboration and feedback.

LLM Tracing

At the core of Langfuse is LLM tracing. Developers can capture detailed production traces of their LLM applications, which simplifies debugging and makes optimization more straightforward. Because each LLM call is recorded together with the surrounding application logic, teams can see where time and money are spent and get concrete figures for performance, latency, and cost. Tracing goes beyond simple logging: it integrates with LangChain and OpenTelemetry, which helps with multilayered applications in production.
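To make the idea of recording each call with its latency and cost concrete, here is a minimal sketch of a tracing decorator. It is not the Langfuse SDK: `Tracer`, `Span`, `observe`, and the per-token price are hypothetical names and values chosen for illustration, and spans are only kept in memory.

```python
import functools
import time
from dataclasses import dataclass


@dataclass
class Span:
    """One recorded step: an LLM call or a piece of surrounding logic."""
    name: str
    latency_s: float
    cost_usd: float = 0.0


class Tracer:
    """Hypothetical in-memory recorder; a real backend would export spans."""

    def __init__(self):
        self.spans = []

    def observe(self, name, cost_fn=None):
        """Return a decorator that records latency (and optional cost) per call."""
        def decorator(func):
            @functools.wraps(func)
            def wrapper(*args, **kwargs):
                start = time.perf_counter()
                result = func(*args, **kwargs)
                latency = time.perf_counter() - start
                cost = cost_fn(result) if cost_fn else 0.0
                self.spans.append(Span(name, latency, cost))
                return result
            return wrapper
        return decorator

    def total_cost(self):
        """Sum the cost of every recorded span."""
        return sum(span.cost_usd for span in self.spans)


tracer = Tracer()


# Stand-in for a model call: returns text plus a made-up token count.
# The price of 0.00001 USD per token is an assumption for the example.
@tracer.observe("fake_llm_call", cost_fn=lambda r: r["tokens"] * 0.00001)
def fake_llm_call(prompt):
    return {"text": prompt.upper(), "tokens": len(prompt.split())}


# Surrounding application logic, traced as its own span.
@tracer.observe("answer_question")
def answer_question(question):
    return fake_llm_call(f"Answer briefly: {question}")["text"]
```

Calling `answer_question("what is tracing")` records two spans, the inner model call first and the outer function second, so both the cost of the call and the latency of the whole request can be inspected afterwards.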

Prompt Management

Langfuse also includes a prompt management system that lets teams manage and version prompts collaboratively, so that the best-performing iteration is the one that gets deployed. Developers can test and refine prompts directly in the Langfuse UI. A recently added dedicated playground supports direct testing and side-by-side comparison of prompts and models.
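The versioning idea can be sketched as an append-only list of prompt versions with movable labels that decide which version an application receives. This is a conceptual illustration only, not Langfuse's implementation or API; `PromptRegistry`, `PromptVersion`, and the `production` and `staging` labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    version: int
    template: str

    def compile(self, **variables):
        """Fill the {placeholders} in the template."""
        return self.template.format(**variables)


class PromptRegistry:
    """Hypothetical in-memory store: append-only versions plus movable labels."""

    def __init__(self):
        self._versions = {}  # prompt name -> list of PromptVersion
        self._labels = {}    # (prompt name, label) -> version number

    def create(self, name, template, labels=()):
        """Add a new version; earlier versions are never overwritten."""
        versions = self._versions.setdefault(name, [])
        prompt = PromptVersion(version=len(versions) + 1, template=template)
        versions.append(prompt)
        for label in labels:
            self._labels[(name, label)] = prompt.version
        return prompt

    def promote(self, name, version, label="production"):
        """Point a label at an existing version, e.g. after testing it."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise KeyError(f"{name} has no version {version}")
        self._labels[(name, label)] = version

    def get(self, name, label="production"):
        """Fetch whichever version the label currently points to."""
        version = self._labels[(name, label)]
        return self._versions[name][version - 1]
```

Because the application asks for a label rather than a version number, promoting or rolling back a prompt does not require a code change.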

Evaluation Tools

User feedback is central to improving any application, and Langfuse provides dedicated features for collecting it. Users can submit feedback from within the application, and that feedback becomes part of the evaluation data. Newly introduced methods, including LLM-as-a-Judge evaluations and manual annotation workflows, add further ways to test models and prompts, so that output quality is measured against real user interactions.
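A judge-based evaluation boils down to running the application on each test item and letting a scoring function rate the output. The sketch below shows that loop under simplifying assumptions: `evaluate` and `exact_match_judge` are hypothetical helpers, and the judge is a plain string comparison standing in for a model that would apply a rubric.

```python
from statistics import mean


def exact_match_judge(question, output, expected):
    """Placeholder judge: a real LLM judge would prompt a model with a rubric."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def evaluate(dataset, app, judge):
    """Run `app` on every item, score it with `judge`, and return the details.

    Returns a tuple of (per-item results, mean score).
    """
    results = []
    for item in dataset:
        output = app(item["input"])
        score = judge(item["input"], output, item["expected"])
        results.append({"input": item["input"], "output": output, "score": score})
    return results, mean(r["score"] for r in results)
```

Keeping the judge as a plain callable means a human annotation score or user feedback value could be plugged into the same loop in place of the automated judge.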

Analytics and Metrics

Langfuse provides metrics for monitoring key performance indicators, including cost, latency, and user satisfaction. A recent addition, the Metrics API, lets users build custom reports and dashboards with adjustable dimensions and time granularity. Teams can use this data to decide where to refine their applications.
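"Adjustable dimensions and time granularity" means grouping the same records by a chosen field and a chosen time bucket. The following sketch performs that grouping locally on plain dictionaries. It does not call the Metrics API, and the record fields (`timestamp`, `model`, `cost_usd`, `latency_s`) are assumed for the example.

```python
from collections import defaultdict
from datetime import datetime


def bucket(ts, granularity):
    """Truncate a timestamp to the start of its hour or day."""
    if granularity == "hour":
        return ts.replace(minute=0, second=0, microsecond=0)
    if granularity == "day":
        return ts.replace(hour=0, minute=0, second=0, microsecond=0)
    raise ValueError(f"unsupported granularity: {granularity}")


def aggregate(records, dimension, granularity):
    """Group by (time bucket, dimension value); sum cost and average latency."""
    groups = defaultdict(list)
    for record in records:
        key = (bucket(record["timestamp"], granularity), record[dimension])
        groups[key].append(record)
    return {
        key: {
            "total_cost_usd": sum(r["cost_usd"] for r in rows),
            "avg_latency_s": sum(r["latency_s"] for r in rows) / len(rows),
            "count": len(rows),
        }
        for key, rows in groups.items()
    }
```

Switching `dimension` from `"model"` to another field, or `granularity` from `"day"` to `"hour"`, changes the shape of the report without touching the underlying records.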

Self-Hosting and Open Source

Langfuse’s commitment to being an open-source platform means it can be self-hosted, granting organizations complete command over their data and infrastructure. This capability is particularly crucial for teams operating in regulated industries, where data privacy is of utmost importance. The entire codebase remains accessible, and with extensive community support, Langfuse is continually refined based on user feedback, ensuring it meets evolving technology demands.

API Integrations

Langfuse is built for integration. It provides SDKs for Python and JavaScript/TypeScript, along with integrations for widely used libraries such as LangChain and OpenTelemetry, among others. This compatibility gives developers a straightforward path to adding Langfuse to their existing workflows. Because the architecture is API-first, every feature is available through the API, which makes custom integrations possible.
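A custom integration starts with an authenticated HTTP request. The sketch below only builds such a request and sends nothing. It assumes the public API accepts HTTP Basic authentication with a project's public key as the username and its secret key as the password, and that traces are listed under `/api/public/traces`; both assumptions should be checked against the official API reference. The host and keys are placeholders.

```python
import base64
import urllib.request


def build_request(host, path, public_key, secret_key):
    """Build (but do not send) a GET request with a Basic auth header.

    Assumption: the API uses Basic auth with public key as username
    and secret key as password. Verify this in the API documentation.
    """
    credentials = f"{public_key}:{secret_key}".encode()
    token = base64.b64encode(credentials).decode()
    return urllib.request.Request(
        url=f"{host.rstrip('/')}{path}",
        headers={"Authorization": f"Basic {token}"},
        method="GET",
    )


# Placeholder host and keys; the path is an assumed endpoint.
request = build_request(
    "https://langfuse.example.com/",
    "/api/public/traces",
    "pk-placeholder",
    "sk-placeholder",
)
```

Passing `request` to `urllib.request.urlopen` would perform the call against a real deployment; the same header works with any other HTTP client.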

Community and Support

Langfuse boasts a rapidly expanding community, which cultivates a spirit of collaboration and support among developers. Through platforms like GitHub Discussions, users can engage actively, report issues, and work together on feature enhancements. Community support is readily accessible through Discord and GitHub, supplemented by thorough documentation designed to assist newcomers. As the Langfuse ecosystem evolves, user feedback remains integral to its ongoing refinement and alignment with real-world needs.

With its open-source ethos and dedicated community, Langfuse stands as a pivotal player in the LLMOps domain, catering to teams ready to leverage large language models in their operational workflows. As the landscape of AI continues to transform, Langfuse remains committed to leading advancements in LLM engineering and observability.

Pros & Cons

Pros

- Open source status enables self-hosting and community-driven development.

- Comprehensive tracing and observability tools provide deep insights into LLM applications.

- Flexible API allows easy integration with various models and frameworks, enhancing adaptability.

Cons

- Some advanced features require a license and are not included in the open-source version.

- Complex initial setup may be a barrier for less technical users.

Frequently Asked Questions

Langfuse is open source and free to use.

According to our latest information, this tool does not seem to have a lifetime deal at the moment, unfortunately.

Langfuse integrates with many popular libraries and platforms, including LangChain, OpenAI, LlamaIndex, and LiteLLM. It also provides SDKs for Python and JavaScript/TypeScript, so developers can add Langfuse to their existing applications. For a complete list of integrations and libraries, refer to the official documentation.

Langfuse provides robust prompt management tools that allow you to version and deploy prompts collaboratively. You can organize prompts in folders, test different versions directly in the Langfuse UI, and optimize them based on user feedback and performance metrics. This feature helps ensure that you're always using the most effective prompts in your LLM applications.

Langfuse includes several evaluation tools that are vital for assessing the quality of LLM applications. You can collect user feedback, use the LLM-as-a-judge feature for evaluations, and annotate results within Langfuse. Additionally, you can run systematic evaluations on datasets to ensure consistent performance, helping you identify issues early on.

To self-host Langfuse, first ensure you have Docker or Kubernetes set up on your infrastructure. Follow the self-hosting guide available on the Langfuse website, which provides step-by-step instructions for deploying Langfuse on your servers. You'll run the same infrastructure that powers Langfuse Cloud, allowing you to manage deployments according to your needs.

Yes, Langfuse provides a powerful, open API that gives you access to all of its features and data. This API allows you to create custom workflows, automate tasks, and integrate Langfuse with other applications or services seamlessly. You can find detailed instructions on how to authenticate and use the API in the documentation.

Langfuse is committed to data privacy and security: it complies with GDPR and holds SOC 2 Type II and ISO 27001 certifications. The platform uses encryption, access controls, and regular security audits to protect user data. Users can also choose to self-host Langfuse and keep complete control over their data and environment.

Langfuse offers metrics tracking features that allow you to monitor costs, latency, and quality of your LLM applications. You can set custom metrics and dimensions through the Metrics API to gain insights into your usage patterns. This enables you to optimize costs and improve the performance of your applications.

Langfuse offers various support options, including community support through GitHub Discussions and Discord, as well as comprehensive documentation for self-help. For time-sensitive issues, users can reach out through in-app chat or email support. Additionally, users on Pro, Team, or Enterprise plans receive dedicated support via private Slack channels.