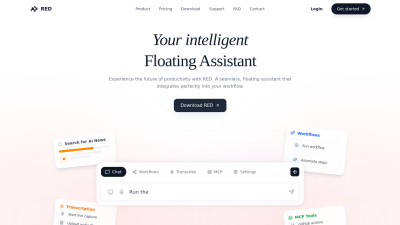

LangSmith

Platform for debugging, monitoring, and evaluating performance of LLM applications.

Langchain.com

What is LangSmith?

LangSmith is a unified observability and evaluation platform that helps teams move their large language model (LLM) applications from prototype to production. Whether used within the LangChain ecosystem or as a standalone solution, LangSmith gives teams the tools to debug, test, and monitor AI application performance. Its feature set is aimed at helping AI agents respond accurately and reliably to user interactions.

Debugging and Observability: Debugging LLM applications poses unique challenges because their behavior is inherently non-deterministic. LangSmith addresses this with step-by-step tracing, which lets developers follow agent activity in real time under varying conditions. With live dashboards, real-time metrics, and timely alerts, teams can quickly identify and resolve performance bottlenecks and malfunctions.
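To make the idea of step-by-step tracing concrete, here is a minimal, library-free sketch in Python. It is not LangSmith's SDK: the `traced` decorator, the `TRACE` list, and the `retrieve`, `generate`, and `answer` steps are hypothetical names invented for illustration. It only shows the general pattern of recording each step's inputs, output or error, and latency so that a run can be inspected afterwards.

```python
import functools
import time

# Hypothetical in-memory trace store; a real platform would ship these records to a backend.
TRACE = []

def traced(step_name):
    """Record inputs, output or error, and latency for each call of the wrapped step."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            record = {"step": step_name, "inputs": {"args": args, "kwargs": kwargs}}
            start = time.perf_counter()
            try:
                record["output"] = fn(*args, **kwargs)
                return record["output"]
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and on failure, so every step leaves a record.
                record["latency_s"] = time.perf_counter() - start
                TRACE.append(record)
        return wrapper
    return decorator

@traced("retrieve")
def retrieve(question):
    # Stand-in for a retrieval step.
    return ["LangSmith is an observability platform."]

@traced("generate")
def generate(question, context):
    # Stand-in for an LLM call.
    return f"Answer based on {len(context)} document(s)."

@traced("pipeline")
def answer(question):
    return generate(question, retrieve(question))

result = answer("What is LangSmith?")
for record in TRACE:
    print(record["step"], "error" in record, round(record["latency_s"], 6))
```

Records are appended when a step finishes, so inner steps appear before the enclosing pipeline step. A real tracing tool would typically also link child steps to their parent run.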

Performance Evaluation: LangSmith lets developers evaluate application effectiveness by saving production traces for in-depth analysis. Teams can score response quality automatically with LLM-as-Judge evaluators and gather feedback from subject-matter experts on relevance, correctness, and harmfulness. This feedback loop is crucial for improving AI applications and ensuring they meet user needs.
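The evaluation loop described above can be sketched roughly as follows. This is an illustrative outline, not LangSmith's evaluator API: `saved_traces`, `stub_judge`, and `evaluate` are hypothetical names, and the judge is a deterministic stub standing in for a model that would normally be prompted with a grading rubric.

```python
from statistics import mean

# Hypothetical saved production traces: the user input and the application's output.
saved_traces = [
    {"input": "What is 2 + 2?", "output": "4"},
    {"input": "What is the capital of France?", "output": "Paris"},
    {"input": "What is the capital of France?", "output": ""},
]

def stub_judge(question, answer):
    """Stand-in for an LLM judge.

    A real judge would send the question, the answer, and a rubric to a model.
    This stub only checks that the answer is non-empty.
    """
    return {"correctness": 1.0 if answer.strip() else 0.0}

def evaluate(traces, judge):
    """Score every trace with the judge and return per-trace scores plus their mean."""
    scores = [judge(t["input"], t["output"])["correctness"] for t in traces]
    return {"scores": scores, "mean_correctness": mean(scores)}

report = evaluate(saved_traces, stub_judge)
print(report)
```

Because the judge is passed in as a plain callable, the same loop could be reused with a human reviewer's scores or a different rubric.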

Collaboration and Prompt Engineering: Effective prompt engineering is key in maximizing the capabilities of LLMs. LangSmith fosters collaboration by providing an intuitive workspace for prompt creation, allowing team members to iterate and refine prompts without extensive technical skills. The integrated Prompt Canvas UI enables seamless testing and recommending variations, thereby accelerating the development process in a more engaging collaborative environment.

Business-Centric Monitoring: LangSmith excels in monitoring business-critical metrics that go beyond standard observability. Teams can track essential performance metrics such as costs, latency, and response quality using live dashboards. The ability to receive alerts and analyze root causes equips stakeholders with the insights required to align AI applications with broader business objectives, ensuring valuable outcomes that transcend mere technical functionality.
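As a rough illustration of what tracking cost, latency, and response quality involves, the sketch below aggregates a few per-run records and raises simple threshold alerts. The record fields, the sample values, and the thresholds are all assumptions made up for this example. They do not reflect LangSmith's data model or its alerting rules.

```python
from statistics import mean, median

# Hypothetical per-run records; the field names and values are illustrative only.
runs = [
    {"latency_s": 0.8, "cost_usd": 0.002, "quality": 0.9},
    {"latency_s": 1.2, "cost_usd": 0.003, "quality": 0.7},
    {"latency_s": 4.0, "cost_usd": 0.010, "quality": 0.4},
]

def summarize(runs):
    """Aggregate cost, latency, and quality across a batch of runs."""
    return {
        "total_cost_usd": sum(r["cost_usd"] for r in runs),
        "median_latency_s": median(r["latency_s"] for r in runs),
        "mean_quality": mean(r["quality"] for r in runs),
    }

def alerts(summary, max_median_latency_s=2.0, min_mean_quality=0.7):
    """Return a message for every threshold the summary violates."""
    messages = []
    if summary["median_latency_s"] > max_median_latency_s:
        messages.append(f"median latency above {max_median_latency_s}s")
    if summary["mean_quality"] < min_mean_quality:
        messages.append(f"mean quality below {min_mean_quality}")
    return messages

summary = summarize(runs)
print(summary)
print(alerts(summary))
```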

Deployment Flexibility: One of LangSmith's hallmark features is its seamless integration into existing operational workflows. With an API-first architecture compliant with OpenTelemetry (OTEL), LangSmith can easily fit into DevOps processes. It offers diverse deployment options, including hybrid and self-hosted setups, catering to enterprises that require strict compliance and data governance protocols. Additionally, LangSmith operates without introducing latency into applications, functioning asynchronously to ensure performance remains unaffected.

Continuous Improvement through Evaluation: The evaluation capabilities of LangSmith ensure that applications are regularly verified against real-world data, making it crucial for ongoing optimization. By integrating automatic evaluations and facilitating human feedback through annotation queues, LangSmith allows teams to maintain a high standard of quality and effectiveness across their AI applications.

Conclusion: As AI technologies evolve, tools like LangSmith become essential for guaranteeing the reliability and performance of LLM applications. By serving as an integrated platform for observability, performance evaluation, and collaborative prompt engineering, LangSmith enables development teams to deploy AI agents with confidence, ultimately enhancing user satisfaction and achieving greater business success.

Pros & Cons

Pros

- Offers unified observability and evaluation tools for AI applications.

- Enables quick debugging of non-deterministic LLM behaviors through step-by-step tracing.

- Facilitates collaboration on prompt engineering with an intuitive Prompt Canvas UI.

Frequently Asked Questions

LangSmith is free to start, with plans ranging from 0 to 39 USD per month.

According to our latest information, this tool does not seem to have a lifetime deal at the moment, unfortunately.

LangSmith offers a unified platform for debugging, testing, application performance monitoring, and observability. Key features include tracing capabilities that let you see each step of your LLM application's execution, so failures can be identified quickly. You can also evaluate your agents' performance using LLM-as-Judge evaluators, collect human feedback, and track essential business metrics, such as costs, latency, and response quality, through live dashboards.

LangSmith can be self-hosted on its enterprise plan. This means you can run LangSmith on your own Kubernetes cluster, so that your data remains within your environment and is not accessible externally. Refer to the official documentation for details on setting up the self-hosting environment.

LangSmith helps evaluate performance through the use of 'traces,' which comprise all inputs and outputs throughout your application's execution. You can save production traces for analysis, automatically score performance using LLM-as-Judge evaluators, and gather feedback from subject-matter experts to assess relevance, correctness, and harmfulness.

Base traces have a shorter retention period of 14 days and are suited for quick debugging, costing ?.50 per 1,000 traces. In contrast, extended traces are retained for 400 days and offer greater utility for ongoing improvement and model tuning, costing ?.00 per 1,000 traces. LangSmith enables you to upgrade base traces to extended ones when needed, effectively balancing cost and value.

To get started with LangSmith, you can sign up for a free account on their platform. After creating an account, follow the documentation available on their website to integrate LangSmith into your application, enabling tracing, evaluations, and prompt engineering features. You'll find step-by-step guides to help you through the initial setup.

LangSmith is designed to be framework-agnostic. You can integrate it with applications built on various programming languages and frameworks, such as Python and TypeScript. By using a standard OpenTelemetry client, you can log traces, run evaluations, and implement prompt engineering, making it versatile for developers working with diverse tech stacks.

LangSmith is designed not to add latency to your application. The SDK uses an asynchronous process to send traces to a collector without impacting application response times. If LangSmith itself has an issue, your application's performance remains unaffected, so it keeps operating normally while you monitor and debug the problem.
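The general pattern behind sending traces without blocking the application can be sketched with a queue and a background thread. This is a simplified illustration under assumptions, not the LangSmith SDK's implementation: `BackgroundTraceSender`, `slow_collector`, and the drop-when-full policy are hypothetical choices made for the example.

```python
import queue
import threading
import time

class BackgroundTraceSender:
    """Hypothetical sender: the application enqueues traces, a worker thread ships them."""

    def __init__(self, send_fn, max_pending=1000):
        self._send_fn = send_fn
        self._queue = queue.Queue(maxsize=max_pending)
        self.dropped = 0
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def submit(self, trace):
        """Never blocks the caller; if the buffer is full, the trace is dropped."""
        try:
            self._queue.put_nowait(trace)
        except queue.Full:
            self.dropped += 1

    def _run(self):
        while True:
            trace = self._queue.get()
            try:
                self._send_fn(trace)
            except Exception:
                # A failing collector must not crash or slow down the application.
                pass
            finally:
                self._queue.task_done()

    def flush(self):
        """Block until every queued trace has been handed to send_fn."""
        self._queue.join()

received = []

def slow_collector(trace):
    time.sleep(0.05)  # simulate network latency to the collector
    received.append(trace)

sender = BackgroundTraceSender(slow_collector)
start = time.perf_counter()
for i in range(5):
    sender.submit({"run": i})
submit_time = time.perf_counter() - start
sender.flush()
print(f"submit took {submit_time:.4f}s, delivered {len(received)} traces")
```

The request path only pays for an in-memory enqueue, while the slow network call happens on the worker thread. Dropping traces when the buffer is full trades completeness for bounded memory, and other designs might spill to disk instead.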

LangSmith provides a comprehensive set of resources, including an introduction guide, best practice eBooks, and video tutorials. Additionally, LangChain Academy offers courses specifically focused on using LangSmith effectively, including training on observability and performance evaluation. You can also access community forums for ongoing support and collaboration.