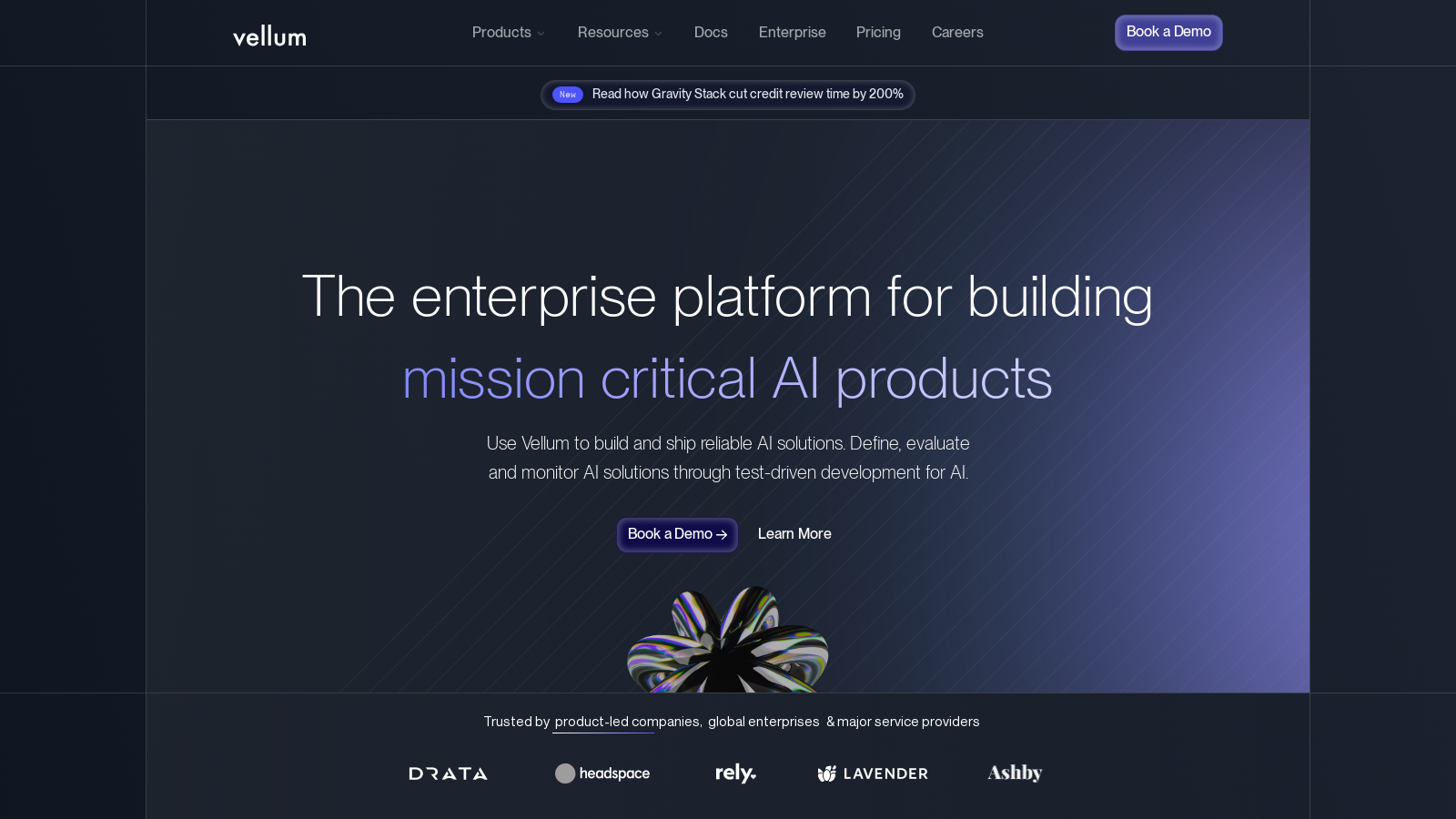

Vellum

Facilitates AI development, testing, and deployment with a focus on collaboration and iteration.

Vellum.ai

What is Vellum?

Vellum is a robust and innovative platform designed to streamline the development, testing, and deployment of AI solutions. With a strong emphasis on collaborative, test-driven development, Vellum empowers teams to create efficient and reliable AI applications quickly and effectively. This tool is particularly intriguing as organizations strive to enhance their workflows with AI.

Key Features:

- AI Development Lifecycle: Vellum supports the entire AI development lifecycle, facilitating everything from prompt engineering to evaluations, deployments, and continuous monitoring.

- Multiple Workspaces: Tailored for enterprise functionality, Vellum allows teams to collaborate across various workspaces while ensuring clarity and control over access permissions.

- Customization: Users can extensively tailor their AI solutions, incorporating features such as role-based access control and external monitoring integrations to meet specific business needs.

- Integrations: The platform seamlessly interfaces with popular AI models and diverse data sources, simplifying the complexities inherent in AI product development.

- Real-time Monitoring: Vellum enables users to monitor AI systems in production dynamically, furnishing essential insights into performance and usage, which supports ongoing refinement and improvement of the models.

AI Solutions Made Simple:

Vellum aims to reduce the complexity of AI development with user-friendly tools suited to both engineers and business stakeholders. Alongside its intuitive interface, it offers an SDK that supports multiple programming languages, which makes for a smoother development process.

Prompt Engineering:

- Vellum's prompt engineering tools let developers design dynamic prompts tailored to specific user scenarios, which improves the reliability and relevance of AI responses.

Deployment and Monitoring:

One of Vellum's standout features is its ability to decouple AI updates from application releases, which leaves room for thorough evaluation and debugging. Users can tune their models based on detailed metrics and real-time feedback, maintaining performance without unnecessary downtime.

Collaboration:

Vellum significantly enhances team collaboration, integrating functionalities that allow for effective versioning and tracking of changes. This ensures that all stakeholders, whether technical or non-technical, can engage seamlessly in the development process, contributing to the overall success of their projects.

Vellum is also built for enterprise-level security and compliance, adhering to standards such as SOC 2 Type II and HIPAA. This commitment to security and responsible data management gives clients confidence as they develop and deploy critical AI applications.

Created to facilitate faster review cycles in operational tasks, Vellum provides essential tools for organizations seeking to innovate their AI solutions and achieve substantial operational efficiency. From startups to large enterprises, Vellum stands ready to be a crucial partner in the advancement of AI technology.

To experience transformative AI development firsthand, connect with Vellum and explore how this powerful platform can elevate your organization's AI deployment capabilities.

Pros & Cons

Pros

- Supports test-driven development for building and monitoring AI solutions.

- Features a low-code interface for rapid deployment and iteration of AI workflows.

- Allows integration of multimodal inputs like images and documents for enhanced AI capabilities.

Cons

- Complex functionality may require a steep learning curve for new users.

Frequently Asked Questions

Vellum is free to start, with plans listed from 0 to 50 USD per month.

According to our latest information, this tool does not seem to have a lifetime deal at the moment, unfortunately.

To integrate custom models into Vellum, you can add both private and public models via the Models tab. Private models can be created outside of Vellum and connected through a seamless onboarding flow. Vellum currently supports various private model templates, including OpenAI models hosted on Azure and fine-tuned models, as well as other custom models. Public models may also require the addition of an API key for access. For more specifics, visit the Models page in Vellum's documentation.

Vellum facilitates experimentation through its user-friendly interface, which allows engineers, product managers, and subject matter experts to iterate on AI business logic collaboratively. Users can define input variables, create scenarios, and adjust prompts for testing. The workflow development IDE also enables the creation of complex AI systems with direct access to outputs, promoting swift iterations of business logic and enhancing product refinement.

Vellum offers comprehensive monitoring capabilities, including a monitoring dashboard that provides real-time insights into performance, usage metrics, and execution history. Features like trace and graph views help identify edge cases, while configurable data retention policies ensure compliance and effective data governance. Users can also integrate external monitoring solutions for an enhanced view of their AI applications in production.

Yes, Vellum supports multimodal inputs, allowing users to incorporate both images and documents into prompts and workflows. Images can be dragged and dropped into prompt scenarios, and documents such as PDFs can be uploaded for analysis and extraction tasks. This is useful for applications that require complex data interpretation.

Practical strategies for prompt engineering in Vellum include using Rich Text Blocks for simple variable substitutions and Jinja Blocks for more complex conditional logic. Users can leverage scenarios to experiment with dynamic inputs, making it easier to refine prompts for desired outputs. Additionally, utilizing prompt caching can reduce costs and improve response times by reusing frequently requested prompt components.
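The split between plain substitution and conditional logic can be sketched with Python's standard library. This is only an analogy under stated assumptions: it does not use Vellum's block syntax or Jinja itself, and the names `PROMPT`, `render_prompt`, and `SCENARIOS`, along with the input variables, are hypothetical.

```python
from string import Template

# Simple variable substitution, the job the text assigns to Rich Text Blocks.
PROMPT = Template("Answer the question from $customer_name about $product.")


def render_prompt(scenario: dict) -> str:
    """Render a prompt for one scenario (a dict of input variables)."""
    prompt = PROMPT.substitute(
        customer_name=scenario["customer_name"],
        product=scenario["product"],
    )
    # Conditional logic, the job the text assigns to Jinja Blocks.
    if scenario.get("is_premium"):
        prompt += " Offer priority support options."
    return prompt


# Each scenario is one set of dynamic inputs to try against the same prompt.
SCENARIOS = [
    {"customer_name": "Ada", "product": "the API", "is_premium": True},
    {"customer_name": "Lin", "product": "billing", "is_premium": False},
]

for scenario in SCENARIOS:
    print(render_prompt(scenario))
```

Running the same template over several scenarios makes it easy to see how one wording change affects every input case at once.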

Yes, Vellum imposes limits on document size and format. Uploaded documents (such as PDFs) should be under 32 MB and must be publicly accessible. Supported image formats are JPEG, PNG, GIF, and WEBP. Staying within these limits helps multimodal inputs process efficiently.
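A pre-upload check along these lines could catch problems before a file is sent. This is a minimal sketch, not part of Vellum: `check_upload` and its `kind` parameter are hypothetical, "32MB" is read as 32 MiB, and `.jpg` is accepted as a JPEG extension by assumption.

```python
from pathlib import Path

# Limits taken from the text above; the helper itself is hypothetical.
MAX_DOCUMENT_BYTES = 32 * 1024 * 1024  # "under 32MB", read here as 32 MiB
SUPPORTED_IMAGE_SUFFIXES = {".jpeg", ".jpg", ".png", ".gif", ".webp"}


def check_upload(filename: str, size_bytes: int, kind: str) -> list:
    """Return a list of problems; an empty list means the file looks acceptable.

    kind is "image" or "document" (an assumed distinction for this sketch).
    """
    problems = []
    suffix = Path(filename).suffix.lower()
    if kind == "image" and suffix not in SUPPORTED_IMAGE_SUFFIXES:
        problems.append(f"unsupported image format: {suffix or 'no extension'}")
    if kind == "document" and size_bytes >= MAX_DOCUMENT_BYTES:
        problems.append("document must be under 32MB")
    return problems


print(check_upload("chart.png", 120_000, "image"))
print(check_upload("scan.bmp", 120_000, "image"))
print(check_upload("report.pdf", 40 * 1024 * 1024, "document"))
```

The check does not cover the requirement that documents be publicly accessible, since that depends on where the file is hosted rather than on the file itself.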

Enterprise clients using Vellum can access a range of enhanced support options, including role-based access control, multiple workspaces, and dedicated AI expert assistance. Additionally, Vellum provides single sign-on (SSO) integration, customizable contracts, and robust SLAs to meet the unique needs of larger organizations, ensuring a scalable and secure environment for AI development.

Vellum streamlines deployment by decoupling AI updates from application releases, so AI logic can change without shipping a new version of the application. Its native release management features provide version control, logging of invocations, and monitoring of execution history. Users can deploy workflows directly from the platform and make iterative modifications easily, keeping the deployment cycle for their AI applications smooth and efficient.
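The idea of decoupling can be illustrated with a small in-memory sketch. Everything here is hypothetical (`DeploymentRegistry`, `build_prompt`, the `support-bot` deployment, and the `production` tag); it is not Vellum's API. It only shows the pattern: application code refers to a deployment name and release tag, while prompt versions are published and promoted separately.

```python
class DeploymentRegistry:
    """Hypothetical in-memory registry; an illustration, not Vellum's API."""

    def __init__(self):
        self._templates = {}   # (deployment name, version) -> prompt template
        self._tags = {}        # (deployment name, release tag) -> version
        self.invocations = []  # execution history: (name, tag, version)

    def publish(self, name, version, template):
        """Store a new prompt version without exposing it to callers yet."""
        self._templates[(name, version)] = template

    def promote(self, name, tag, version):
        """Point a release tag at an already published version."""
        if (name, version) not in self._templates:
            raise KeyError(f"{name} has no version {version}")
        self._tags[(name, tag)] = version

    def render(self, name, tag, **inputs):
        """Resolve the tag, log the invocation, and fill in the template."""
        version = self._tags[(name, tag)]
        self.invocations.append((name, tag, version))
        return self._templates[(name, version)].format(**inputs)


def build_prompt(registry, question):
    # Application code: it names a deployment and tag, never a prompt version.
    return registry.render("support-bot", "production", question=question)


registry = DeploymentRegistry()
registry.publish("support-bot", 1, "Answer briefly: {question}")
registry.promote("support-bot", "production", 1)
first = build_prompt(registry, "How do I reset my password?")

# Ship a new prompt version; build_prompt is untouched.
registry.publish("support-bot", 2, "Answer briefly and cite the docs: {question}")
registry.promote("support-bot", "production", 2)
second = build_prompt(registry, "How do I reset my password?")

print(first)
print(second)
print(registry.invocations)
```

Because `build_prompt` never changes, a prompt update is a promote step rather than an application release, and the invocation log records which version served each call.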