LLMs.txt Generator

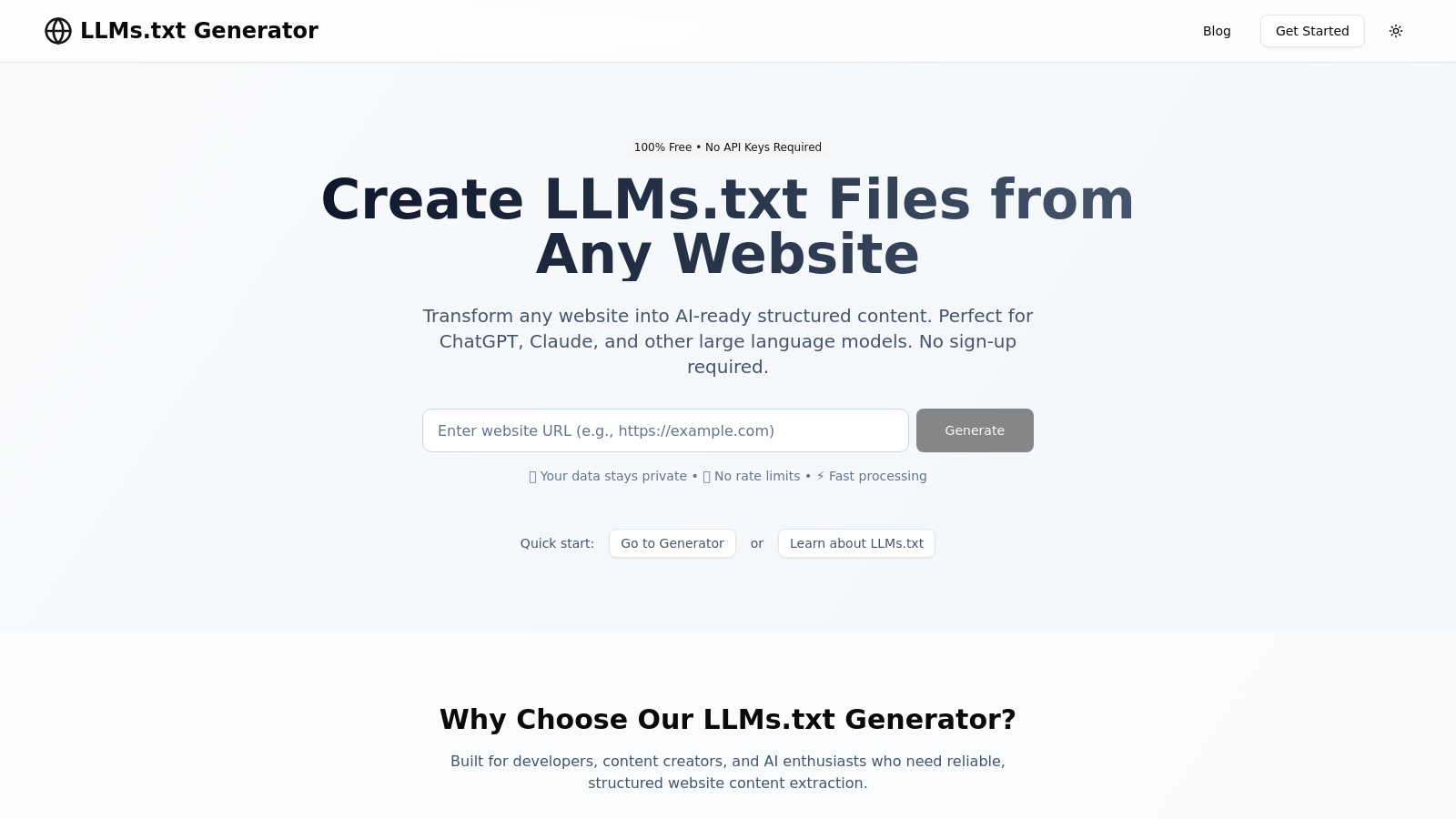

Convert website content into structured LLMs.txt files optimized for AI consumption.

Llmstxtgenerator.cc

What is LLMs.txt Generator?

The LLMs.txt Generator is a free web-based tool designed specifically for developers, content creators, and AI enthusiasts looking to convert website content into a structured format optimized for AI consumption. By transforming traditional web pages into LLMs.txt files, users can ensure their site’s crucial data is easily accessible for large language models like ChatGPT and Claude.

Why Use LLMs.txt?

AI tools work best with a clear representation of content. Traditional web pages are often cluttered with navigation menus, ads, and other elements that distract from the core message. LLMs.txt addresses this by providing clean, streamlined output that focuses on the important information, while respecting robots.txt and privacy standards.

Getting Started with LLMs.txt

Using the LLMs.txt Generator takes three steps:

- Enter Your URL: Simply paste the website URL that you want to convert. The tool checks accessibility and permissions automatically.

- Configure Your Options: Customize the crawling depth, content filters, and the output format according to your needs. You can choose how much content to include, defining options such as summary or full content to fit the intended use.

- Download Your Results: After processing, get your formatted LLMs.txt file that you can use with any AI model.

Key Features of the LLMs.txt Generator

1. Fast Processing: The generator quickly processes websites without overwhelming their servers, ensuring a smooth user experience.

2. Privacy First: The tool collects no data, ensuring all user information remains private and secure.

3. No Rate Limits: Users can generate LLMs.txt files without usage limits on the tool itself, making it suitable for high-volume needs. Crawling of the target site is still rate limited so that its servers are not overwhelmed.

4. AI Optimization: The structured output generated is specifically designed to align with the requirements of AI models, ensuring compatibility and ease of use.

The Importance of Ethical Web Scraping

LLMs.txt Generator is built on the principles of ethical scraping. It adheres to website policies and respects barriers set by the robots.txt file. This ethical approach helps ensure that website owners are treated fairly and that their resources are not exploited.

Users are also encouraged to consider the legal aspects of scraping, including copyright law and the privacy of individuals. The generator is intended to support high-quality data extraction without infringing on the rights of content creators.

Continuous Improvement and Community Feedback

The LLMs.txt Generator is an open-source project driven by community engagement. User feedback plays a crucial role in the development of features and improvements. This collaborative approach helps the tool evolve and meet the real-world needs of its users in the AI development ecosystem.

Whether you're a developer seeking to enhance AI applications, or a content creator aiming to optimize your articles for AI-driven tools, the LLMs.txt Generator is the go-to solution for effective AI content preparation.

Conclusion

By offering a free, accessible tool designed specifically for creating LLMs.txt files, the LLMs.txt Generator empowers users to transform their website content into AI-ready structures. The tool is user-friendly, respects privacy, and is tailored to meet the demands of modern AI applications.

Pros & Cons

Pros

- Transforms any website into AI-ready structured content without requiring API keys.

- Respects robots.txt and incorporates ethical crawling practices for data extraction.

- Offers customizable options for crawling depth, content filtering, and output formats.

Frequently Asked Questions

LLMs.txt Generator is available at no cost.

According to our latest information, this tool does not seem to have a lifetime deal at the moment, unfortunately.

The LLMs.txt Generator is designed to transform various types of website content into an AI-ready format. You can optimize e-commerce product descriptions, documentation, blog posts, community forum discussions, and corporate information into structured content. This format ensures that AI models like ChatGPT and Claude can understand and utilize your content effectively.

LLMs.txt Generator adheres to ethical web scraping practices by respecting the 'robots.txt' file of the target website. This means that it checks for permissions before crawling, ensures compliance with guidelines for automated access, and incorporates rate limiting to prevent overwhelming servers. This commitment ensures that your scraping activities are respectful and compliant with site policies.
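The permission check described above can be illustrated with Python's standard `urllib.robotparser` module. This is a minimal sketch, not the generator's actual implementation: the robots.txt content, the `example-bot` user agent, and the helper name `build_parser` are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, parsed from a string so the
# example runs without fetching anything over the network.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a reusable parser object."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# Ask whether a (hypothetical) crawler may fetch each URL.
allowed_public = parser.can_fetch("example-bot", "https://example.com/docs/intro")
allowed_private = parser.can_fetch("example-bot", "https://example.com/private/page")

# Crawl-delay, if present, gives the number of seconds to wait between requests.
delay = parser.crawl_delay("example-bot")
```

A crawler built along these lines would skip any URL for which `can_fetch` returns `False` and sleep for `delay` seconds between requests when a delay is declared.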

When generating LLMs.txt files, users can customize several parameters. You can specify the crawling depth (shallow, medium, or deep), maximum number of pages to crawl (between 1 and 100), and select the output format (full text, summary, or custom). Additionally, you can use filter options to include or exclude specific content, ensuring that the generated file meets your particular needs.
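The parameters listed above could be modelled as a small validated settings object. The sketch below is hypothetical: the class name `CrawlOptions`, its field names, and its default values are assumptions, and only the allowed values (three depths, 1 to 100 pages, three output formats) come from the description above.

```python
from dataclasses import dataclass

VALID_DEPTHS = ("shallow", "medium", "deep")
VALID_FORMATS = ("full text", "summary", "custom")


@dataclass(frozen=True)
class CrawlOptions:
    """Hypothetical container for the generator's documented options."""

    depth: str = "medium"            # assumed default
    max_pages: int = 10              # assumed default
    output_format: str = "summary"   # assumed default
    include_patterns: tuple[str, ...] = ()
    exclude_patterns: tuple[str, ...] = ()

    def __post_init__(self) -> None:
        # Reject values outside the ranges described in the text.
        if self.depth not in VALID_DEPTHS:
            raise ValueError(f"depth must be one of {VALID_DEPTHS}")
        if not 1 <= self.max_pages <= 100:
            raise ValueError("max_pages must be between 1 and 100")
        if self.output_format not in VALID_FORMATS:
            raise ValueError(f"output_format must be one of {VALID_FORMATS}")


options = CrawlOptions(depth="deep", max_pages=100, output_format="full text")
```

Validating in `__post_init__` means an out-of-range setting fails at construction time rather than partway through a crawl.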

If your LLMs.txt file is missing content, first ensure that the website has substantial text information. You can adjust the content filters, such as minimum word counts or exclude patterns, and attempt to regenerate the file. It's also recommended to review the website structure and verify that relevant content is not behind any login or block that prevents automated access.
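To see why a page might drop out of the output, it can help to picture the filters as a simple predicate. The following is only an illustrative sketch: the function name `keep_page`, the default threshold of 50 words, and the use of regular expressions for exclude patterns are assumptions, not documented behavior of the tool.

```python
import re


def keep_page(url: str, text: str, min_words: int = 50,
              exclude_patterns: tuple[str, ...] = ()) -> bool:
    """Return True if a page passes the (hypothetical) content filters."""
    # Drop the page if its URL matches any exclude pattern.
    if any(re.search(pattern, url) for pattern in exclude_patterns):
        return False
    # Drop the page if it has fewer words than the minimum.
    return len(text.split()) >= min_words


long_text = "word " * 60
short_text = "too short"

kept = keep_page("https://example.com/blog/post", long_text)
too_short = keep_page("https://example.com/blog/post", short_text)
excluded = keep_page("https://example.com/tag/news", long_text,
                     exclude_patterns=(r"/tag/",))
```

Under this model, lowering the minimum word count or removing an overly broad exclude pattern would bring missing pages back into the file.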

Yes, the LLMs.txt Generator is well-suited for large websites. It can handle multiple pages efficiently by allowing you to set the maximum pages to crawl. For frequently updated sites, consider setting up regular regeneration of the LLMs.txt file to keep the content fresh. You can automate this process with batching or scheduled tasks for optimal results.

Yes, it's essential to understand the legal aspects associated with web scraping. Always review a website's terms of service to ensure compliance. Be mindful of copyright laws, privacy regulations, and the implications of data protection laws (like GDPR) when scraping personal data. Implementing proper content attribution and respectful usage of the scraped data is crucial.

To optimize your LLMs.txt files, focus on generating content that is clean and structured. Use precise, hierarchical categorization with relevant headings. Avoid including navigation, advertisements, or redundant content. Regularly review and update your files, and consider testing how different AI models interact with your content to refine the generation process continuously.
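As an illustration of hierarchical structure, the sketch below renders a file in the layout commonly described for llms.txt: a top-level title, a short blockquote summary, and headed sections containing link lists. The function name `render_llms_txt` and the sample data are hypothetical, and the generator's actual output may differ.

```python
def render_llms_txt(title: str, summary: str,
                    sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render a title, summary, and link sections as Markdown-style text.

    Each section maps a heading to (name, url, note) tuples; the note may be empty.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            entry = f"- [{name}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical sample data for illustration.
example = render_llms_txt(
    "Example Site",
    "Docs and guides for Example.",
    {"Docs": [("Intro", "https://example.com/docs/intro", "Getting started")]},
)
```

Keeping one heading per content category and one line per page makes it easy to review the file by eye and to spot redundant entries.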

After generating your LLMs.txt file, you should upload it to your website's root directory and verify its accessibility by entering the file URL directly in a web browser. Ensure it is publicly accessible without restrictions from robots.txt or other measures. Testing with various AI platforms can also help confirm its readability and effectiveness for AI consumption.
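The browser check can also be scripted. This minimal sketch uses only the Python standard library and assumes the file is served as `/llms.txt` at the site root; the helper names `llms_txt_url` and `is_publicly_accessible` are hypothetical.

```python
from urllib.parse import urljoin
from urllib.request import Request, urlopen


def llms_txt_url(site_url: str) -> str:
    """Build the root-level file URL for a site (assumes the name /llms.txt)."""
    return urljoin(site_url, "/llms.txt")


def is_publicly_accessible(site_url: str, timeout: float = 10.0) -> bool:
    """Return True if the file URL answers a HEAD request with HTTP 200."""
    request = Request(llms_txt_url(site_url), method="HEAD")
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # Covers HTTP errors, DNS failures, refused connections, and timeouts.
        return False


# Building the URL needs no network access.
target = llms_txt_url("https://example.com/blog/post")
```

Calling `is_publicly_accessible("https://example.com")` performs a real request, so it is best run after the upload rather than as part of an offline check. Some servers do not answer HEAD requests, in which case a GET request may be needed instead.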