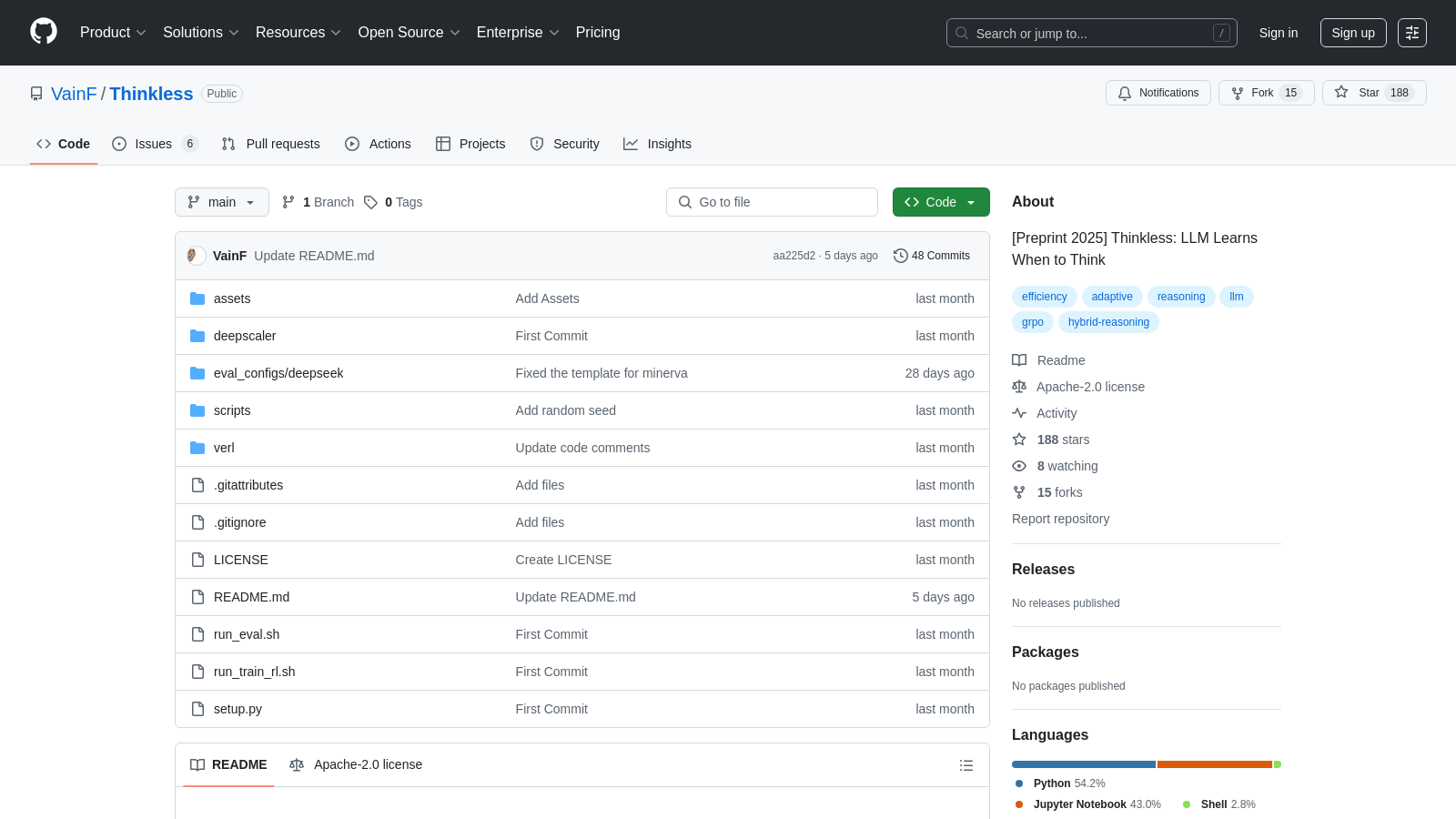

Thinkless

Framework for LLMs to optimize reasoning by choosing response complexity based on task demands.

What is Thinkless?

Thinkless is a framework that teaches large language models (LLMs) when to think before they respond. It uses a reinforcement-learning training paradigm so that the model learns to choose between a short answer and long-form reasoning according to the complexity of the task. The framework has recently received significant updates that strengthen its approach to adaptive reasoning.

The core of Thinkless is the Decoupled Group Relative Policy Optimization (DeGRPO) algorithm. DeGRPO splits the learning objective into two components: a control-token loss, which governs the choice of reasoning mode, and a response loss, which improves the accuracy of the generated answer. Separating the two stabilizes training and prevents the collapse often seen in naive implementations of similar methods. The reduction in reasoning cost then comes from the model learning to select the short mode whenever long-form reasoning is unnecessary.
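To make the decoupling concrete, here is a simplified form of the two objectives, written as on-policy surrogate losses with PPO-style ratio clipping and the KL penalty omitted. The notation is ours and may differ from the paper's: $c_i$ is the control token of the $i$-th of $G$ sampled responses to query $q$, $a_{i,1},\dots,a_{i,T_i}$ are its $T_i$ answer tokens, $r_i$ is its reward, and $\alpha$ is the weight on the control-token term.

```latex
A_i = \frac{r_i - \operatorname{mean}(r_1,\dots,r_G)}{\operatorname{std}(r_1,\dots,r_G)}

\mathcal{L}_{\text{GRPO}} = -\frac{1}{G}\sum_{i=1}^{G} \frac{1}{T_i + 1}
  \Big[ A_i \log \pi_\theta(c_i \mid q)
      + \sum_{t=1}^{T_i} A_i \log \pi_\theta(a_{i,t} \mid q, c_i, a_{i,<t}) \Big]

\mathcal{L}_{\text{DeGRPO}} = -\frac{1}{G}\sum_{i=1}^{G}
  \Big[ \alpha\, A_i \log \pi_\theta(c_i \mid q)
      + \frac{1}{T_i}\sum_{t=1}^{T_i} A_i \log \pi_\theta(a_{i,t} \mid q, c_i, a_{i,<t}) \Big]
```

In the first form the single control token carries weight $1/(T_i + 1)$, so its influence shrinks as responses get longer and differs between short and long samples. In the decoupled form it carries a fixed weight $\alpha$ that does not depend on response length.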

How It Works

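The heart of the Thinkless framework is a pair of control tokens. The model begins each response with one of them: `<short>`, which signals a concise answer, or `<think>`, which signals detailed, long-form reasoning.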
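Because the mode is decided by the first token of a response, it can be read off the decoded output. The helper below is a minimal sketch that assumes the control tokens appear as the literal strings `<think>` and `<short>` at the start of the text; `parse_response` is a hypothetical name, not part of the Thinkless codebase.

```python
from typing import Tuple

# Assumed control-token strings; check the tokenizer of the checkpoint you use.
THINK_TOKEN = "<think>"
SHORT_TOKEN = "<short>"


def parse_response(text: str) -> Tuple[str, str]:
    """Split a generated response into (mode, body).

    Assumes exactly one control token leads the response. Returns mode
    "think", "short", or "unknown" when neither token is present.
    """
    stripped = text.lstrip()
    if stripped.startswith(THINK_TOKEN):
        return "think", stripped[len(THINK_TOKEN):].lstrip()
    if stripped.startswith(SHORT_TOKEN):
        return "short", stripped[len(SHORT_TOKEN):].lstrip()
    return "unknown", stripped


print(parse_response("<short>x = 19"))  # -> ('short', 'x = 19')
```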

Key Features

- Adaptive Reasoning: Thinkless chooses between short and long-form responses according to task complexity and the model's own capabilities.

- Enhanced Efficiency: Across several benchmarks, Thinkless reduces the use of long-chain thinking by 50% to 90%, lowering inference cost while preserving, and in some cases improving, benchmark performance.

- Reinforcement Learning Approach: Thinkless is trained with reinforcement learning to assess task complexity and predict when deeper reasoning is required.

- Empirical Results: The latest versions of the framework report strong results in empirical evaluations.

Installation and Usage

Thinkless can be set up in a conda environment. Installation involves installing the Python dependencies, downloading the relevant model components from the official repository, and launching training with a single command-line invocation. The documentation in the repository walks through each step.

Conclusion

In summary, Thinkless changes how LLMs approach reasoning tasks: by deciding when long-form reasoning is actually needed, it improves computational efficiency while maintaining response accuracy. This makes it a useful resource for both researchers and practitioners in the rapidly evolving field of artificial intelligence, and its ongoing development aims to keep it aligned with the future needs of large language models and advanced reasoning.

Pros & Cons

Pros

- Employs adaptive reasoning to improve efficiency in task performance.

- Uses reinforcement learning with two control tokens to select the reasoning mode.

- Significantly reduces the use of long-chain reasoning, which speeds up inference.

Frequently Asked Questions

Thinkless is open source and free to use.


The DeGRPO algorithm is at the core of the Thinkless framework. It decomposes the learning objective of hybrid reasoning into two separate components, a control-token loss and a response loss, which allows fine-grained control over how much each objective contributes during training. The control-token loss governs how the model chooses between short-form and long-form reasoning, while the response loss improves the accuracy of the generated answers. By stabilizing training and preventing collapse, DeGRPO significantly improves performance on various reasoning benchmarks.
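The decoupling can be sketched in plain Python on precomputed log-probabilities. This is a simplified, hypothetical illustration, not the repository's implementation: it omits PPO-style ratio clipping and the KL penalty, uses the population standard deviation for the group-relative advantage, and the names `group_advantages`, `degrpo_loss`, and `alpha` are our own.

```python
import math
from typing import List, Sequence


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Group-relative advantages: center rewards on the group mean, scale by the group std."""
    g = len(rewards)
    mean = sum(rewards) / g
    std = math.sqrt(sum((r - mean) ** 2 for r in rewards) / g)
    return [(r - mean) / (std + eps) for r in rewards]


def degrpo_loss(
    rewards: Sequence[float],
    control_logps: Sequence[float],
    response_logps: Sequence[Sequence[float]],
    alpha: float,
) -> float:
    """Simplified decoupled loss for one group of G sampled responses.

    control_logps[i]  : log-prob of the control token chosen in sample i
    response_logps[i] : per-token log-probs of the answer tokens of sample i
    alpha             : weight on the control-token term
    """
    advs = group_advantages(rewards)
    total = 0.0
    for a, c_lp, r_lps in zip(advs, control_logps, response_logps):
        # Control-token term: fixed weight alpha, independent of response length.
        control_term = alpha * a * c_lp
        # Response term: normalized by its own length only.
        response_term = a * sum(r_lps) / len(r_lps)
        total += control_term + response_term
    # Negate so that minimizing the loss maximizes advantage-weighted log-likelihood.
    return -total / len(advs)


loss = degrpo_loss([1.0, -1.0], [-0.1, -0.2], [[-1.0, -1.0], [-2.0]], alpha=0.5)
print(f"loss = {loss:.4f}")
```

Setting `alpha` to 0 removes the control token's contribution entirely. In a non-decoupled objective its weight would instead be 1/(T_i + 1), shrinking as the response grows, which is the imbalance the separate weight is meant to address.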

Thinkless improves computational efficiency by letting language models choose between short-form and long-form reasoning according to task complexity and the model's capabilities. By reducing the use of long-chain thinking by 50% to 90%, Thinkless lowers resource consumption during inference while maintaining or even improving accuracy. This makes it more efficient than approaches in which a large language model always reasons at full length.
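A back-of-the-envelope calculation shows why fewer long chains translate into lower inference cost. The sketch assumes each query costs roughly a fixed number of generated tokens in each mode; the lengths used below are made-up placeholders, not measurements from Thinkless, and `expected_tokens` is a hypothetical helper.

```python
def expected_tokens(think_ratio: float, think_len: float, short_len: float) -> float:
    """Expected generated tokens per query when a fraction `think_ratio` of
    queries use long-form reasoning and the rest get a short answer."""
    if not 0.0 <= think_ratio <= 1.0:
        raise ValueError("think_ratio must be in [0, 1]")
    return think_ratio * think_len + (1.0 - think_ratio) * short_len


# Hypothetical per-mode lengths, for illustration only.
THINK_LEN, SHORT_LEN = 4000.0, 400.0

always_think = expected_tokens(1.0, THINK_LEN, SHORT_LEN)
adaptive = expected_tokens(0.5, THINK_LEN, SHORT_LEN)  # long chains cut by 50%
saved = 1 - adaptive / always_think
print(f"{always_think:.0f} -> {adaptive:.0f} tokens per query ({saved:.0%} saved)")
```

Because short answers are much cheaper than long chains, the saving in total tokens tracks the reduction in long-chain usage closely, but stays somewhat below it since short answers still cost something.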

To install Thinkless, create a Conda environment with Python 3.10 and install the required dependencies, including PyTorch, lm_eval (the LM Evaluation Harness), and Ray. For GPU support, install the NVIDIA CUDA version that matches your PyTorch build. The exact installation commands are in the project's README on GitHub; consult it for any additional requirements specific to your system.
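After following the README, a quick sanity check of the environment can be scripted. This is a hypothetical helper, not part of Thinkless: it only checks that the interpreter is Python 3.10 and that the `torch`, `lm_eval`, and `ray` modules can be located, without importing them.

```python
import importlib.util
import sys
from typing import Dict, Tuple

# Import names of the dependencies mentioned above.
REQUIRED_PACKAGES = ("torch", "lm_eval", "ray")


def check_environment(expected_python: Tuple[int, int] = (3, 10)) -> Dict[str, bool]:
    """Report whether the Python version matches and each package is importable."""
    report = {"python": tuple(sys.version_info[:2]) == tuple(expected_python)}
    for name in REQUIRED_PACKAGES:
        # find_spec returns None for a missing top-level module instead of raising.
        report[name] = importlib.util.find_spec(name) is not None
    return report


if __name__ == "__main__":
    for item, ok in check_environment().items():
        print(f"{item:8s} {'ok' if ok else 'missing or mismatched'}")
```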

Yes. Thinkless is built on standard tools such as PyTorch, which is installed as the torch package dependency, so it can be combined with other libraries and frameworks for tasks such as data processing and further model training. See the installation and usage instructions in the GitHub repository for details on integration.

To get started with Thinkless, first set up your environment with the required Python version and libraries. After activating the Conda environment, import AutoModelForCausalLM and AutoTokenizer from the transformers library, load the Thinkless model, and prepare your input prompts. The project's documentation includes example code for generating responses and evaluating model outputs.
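The steps above might look like this with the Hugging Face transformers API. This is a minimal sketch, not the authors' example: the checkpoint name is a placeholder to replace with the one published in the repository, the instruction string is an assumption, and the exact prompt format used by the authors may differ. Loading with `device_map="auto"` requires the accelerate package.

```python
from typing import Dict, List

# Hypothetical placeholder: substitute the checkpoint named in the Thinkless README.
MODEL_NAME = "path/or/hub-id-of-thinkless-checkpoint"
# Assumed math-style instruction; adjust to the prompt format used in the repository.
INSTRUCTION = "Please reason step by step, and put your final answer within \\boxed{}."


def build_messages(question: str) -> List[Dict[str, str]]:
    """Wrap a question in a single-turn chat message."""
    return [{"role": "user", "content": f"{INSTRUCTION}\n{question}"}]


def generate(question: str, max_new_tokens: int = 4096) -> str:
    """Load the model with transformers and return the decoded completion."""
    # Imported lazily so build_messages() works without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_NAME)
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_NAME, torch_dtype="auto", device_map="auto"
    )
    text = tokenizer.apply_chat_template(
        build_messages(question), tokenize=False, add_generation_prompt=True
    )
    inputs = tokenizer([text], return_tensors="pt").to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Drop the prompt tokens; keep special tokens so the control token stays visible.
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=False)
```

Calling `generate("What is 12 * 7?")` and checking whether the decoded text begins with `<think>` or `<short>` (assuming those are the control-token strings) shows which mode the model chose for that prompt.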

You can evaluate the Thinkless model with the evaluation scripts provided in the repository, which can repeat inference multiple times to gather results across different tasks and metrics. The evaluation prompts are based on openai/simple-evals, and the evaluation commands compute metrics such as accuracy and response quality from the results saved in calcs, which helps characterize the model's capabilities.
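Aggregating repeated runs is straightforward once per-problem results are available. The record format below (a `correct` flag and a `mode` field per problem, one list per repetition) is a hypothetical structure for illustration, not the format written by the repository's scripts; `summarize_runs` is likewise our own name.

```python
import statistics
from typing import Dict, List


def summarize_runs(runs: List[List[Dict[str, object]]]) -> Dict[str, float]:
    """Aggregate repeated inference runs.

    runs[k] holds one record per problem for repetition k, each with
    "correct" (bool) and "mode" ("think" or "short").
    """
    # Accuracy of each repetition, then mean and spread across repetitions.
    accs = [sum(bool(r["correct"]) for r in run) / len(run) for run in runs]
    records = [r for run in runs for r in run]
    think = sum(r["mode"] == "think" for r in records)
    return {
        "mean_accuracy": statistics.mean(accs),
        "accuracy_std": statistics.pstdev(accs),
        "think_ratio": think / len(records),
    }


runs = [
    [{"correct": True, "mode": "short"}, {"correct": False, "mode": "think"}],
    [{"correct": True, "mode": "short"}, {"correct": True, "mode": "think"}],
]
print(summarize_runs(runs))
```

Reporting the think ratio next to accuracy makes the efficiency trade-off visible in the same table as the quality metric.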

While Thinkless significantly improves efficiency in reasoning tasks, potential limitations include a dependency on the initial model quality and the quality of the training data. The algorithm may also not perform optimally on specific, highly complex reasoning tasks that require a deep understanding of context. Additionally, fine-tuning hyperparameters such as thinkless_alpha and correct_think_reward may require experimentation to achieve the best results, which can be time-consuming.

When fine-tuning Thinkless, start by adjusting hyperparameters such as thinkless_alpha and correct_think_reward. If convergence is slow or the model becomes biased toward one reasoning mode, consider increasing these parameters incrementally to improve performance. Experimenting with different training datasets and with the techniques described in the project's documentation can also help tailor performance to your use case.
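To build intuition for what correct_think_reward might control, the sketch below assumes (this is not stated above) that it is the reward given to a correct long-form answer, with +1 for a correct short answer and -1 for a wrong one, and that rewards within a group are turned into group-relative advantages. The functions `reward` and `advantages` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def reward(mode: str, correct: bool, correct_think_reward: float) -> float:
    """Hypothetical reward: +1 for a correct short answer,
    `correct_think_reward` for a correct long-form answer, -1 if wrong."""
    if not correct:
        return -1.0
    return 1.0 if mode == "short" else correct_think_reward


def advantages(samples: Sequence[Tuple[str, bool]], correct_think_reward: float) -> List[float]:
    """Group-relative advantages for (mode, correct) samples of one query."""
    rs = [reward(m, c, correct_think_reward) for m, c in samples]
    mean = sum(rs) / len(rs)
    std = math.sqrt(sum((r - mean) ** 2 for r in rs) / len(rs))
    if std == 0.0:
        std = 1.0  # identical rewards: every advantage is zero anyway
    return [(r - mean) / std for r in rs]


group = [("short", True), ("think", True)]
print(advantages(group, correct_think_reward=1.0))
print(advantages(group, correct_think_reward=0.5))
```

Under these assumptions, when both modes answer correctly a value of 1.0 gives both samples zero advantage, whereas 0.5 gives the short answer a positive advantage and the long one a negative advantage, nudging the policy toward the short mode.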